Security tools are often evaluated in isolation. But in a SOC environment, every tool sits inside a web of people, escalation paths, approval chains, and organizational politics. Many AI SOC claims sound implausible because those surrounding constraints are ignored.

At the same time, the reality facing modern SOCs is undeniable. Attack surfaces now span cloud, endpoint, identity, and SaaS. Adversaries already use automation and AI to move faster than human workflows allow. Alert volumes and attack tempo have crossed a threshold where manual operations cannot keep up. Automation is no longer optional. Once that point is accepted, most serious SOC leaders converge on a different question.

“Okay, but what should never be fully automated?”

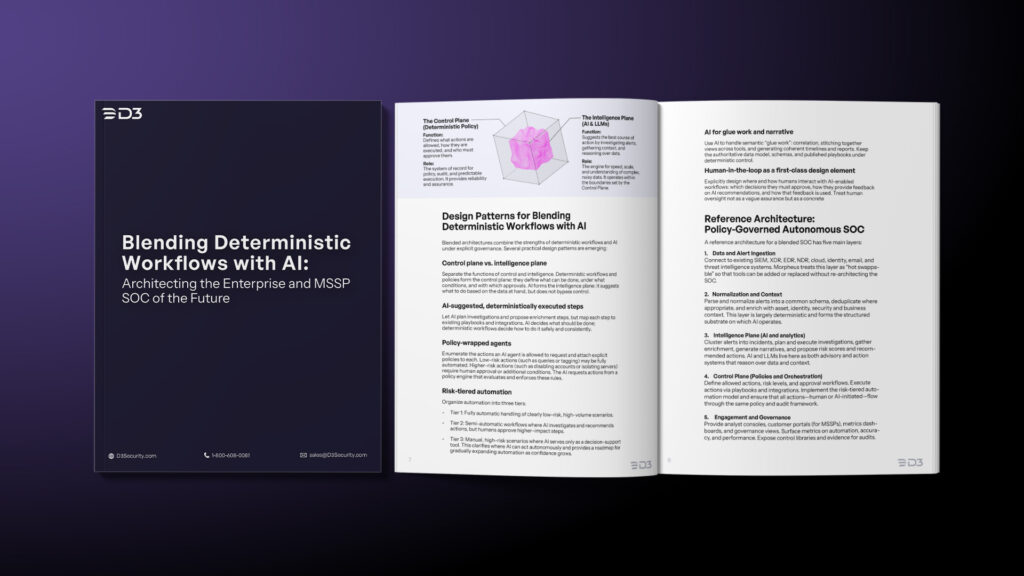

We argued in our white paper on blended deterministic and AI SOC architectures that this is a strategic question. Some SOC decisions should never execute without a human. The rest should not require one.

Not All SOC Decisions Are Created Equal

To decide what not to automate, you first need to categorize what you’re actually doing.

A typical SOC decision pipeline spans:

1. Detection – rules, models, signatures, behavioral analytics.

2. Triage & Investigation (L1/L2) – enrichment, correlation, timeline building, initial hypotheses.

3. Response & Remediation – containment, eradication, recovery, long-term fixes.

4. Communications & Governance – breach notifications, regulatory filings, customer updates, board reporting.

Each stage carries a different failure cost. Automating a hash reputation lookup is low-risk. Automating “disable all accounts in this OU (Organizational Unit)” is not. Automatically drafting a post-incident report is fine. Automatically sending it to regulators is something else entirely.

So the question “what should never be fully automated” really means something more precise:

Which decisions, under which conditions, should never execute without a human explicitly owning the outcome?

Four Criteria That Define the Boundary

From the white paper and conversations with practitioners, four criteria keep showing up:

1. Impact and Irreversibility

Ask: If this goes wrong, how bad is it, and how easy is it to undo?

Auto-closing a low-severity alert that you can re-open from logs? Probably fine if your triage false-negative rate is well understood. Isolating a critical production database or shutting down OT systems? That’s business-impacting and potentially safety-impacting.

Any action that is both high-impact and hard to reverse should carry stronger requirements, such as approvals or multi-party sign-off, or remain manual altogether.

2. Regulatory and Contractual Sensitivity

Certain SOC decisions trigger legal accountability rather than technical remediation. Breach notification thresholds, regulator filings, and customer disclosures all fall into this category.

AI can analyze evidence quickly and consistently. The final determination still carries legal responsibility, and regulators expect that responsibility to rest with a human. In these cases, AI is best used to prepare the decision, not to make it.

3. Ambiguity and “Unknown Unknowns”

Automating decisions is easier when data is rich and consistent, scenarios are well understood and repeatable, and the model’s behavior under edge cases is well tested.

But in high-ambiguity cases (new attack techniques, signals that don’t look like anything you’ve seen before, or conflicting evidence), AI’s confidence is less meaningful. The less familiar the scenario, the more value there is in a human steering the investigation.

4. Adversarial Manipulation Risk

Some decisions are easier for attackers to intentionally manipulate. Anything that reads external inputs (logs, emails, tickets, user reports) and maps them to high-impact actions can be abused via prompt injection or poisoning. LLM-based agents that read untrusted text and then call tools need extra scrutiny.

For those, you may choose to keep AI strictly advisory, require human review of both the AI’s reasoning and the evidence it used, and add specific guardrails and validation steps in deterministic workflows.
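To make the guardrail idea concrete, here is a minimal Python sketch of a deterministic check that sits between an LLM agent and its tools. Everything in it is an illustrative assumption rather than a reference to any product: the `ProposedAction` fields, the `HIGH_IMPACT_ACTIONS` set, and the `review_mode` function are hypothetical names. The idea is that a target only counts if it appears in structured, parsed evidence, and that high-impact actions influenced by untrusted text stay advisory.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical set of high-impact actions; replace with your own catalog.
HIGH_IMPACT_ACTIONS = {"disable_account", "isolate_host", "rotate_keys"}


@dataclass(frozen=True)
class ProposedAction:
    name: str                  # action the agent wants to run
    target: str                # entity the action would apply to
    read_untrusted_text: bool  # True if the agent read emails, tickets, raw logs, etc.


def review_mode(action: ProposedAction, trusted_entities: set[str]) -> str:
    """Return 'reject', 'advisory', or 'auto' for an agent-proposed action."""
    # The target must come from structured, parsed evidence, not only from
    # free text the agent read. This blocks targets that were introduced by
    # instructions injected into that text.
    if action.target not in trusted_entities:
        return "reject"
    # High-impact actions influenced by untrusted text stay advisory: a human
    # reviews both the reasoning and the evidence before anything executes.
    if action.name in HIGH_IMPACT_ACTIONS and action.read_untrusted_text:
        return "advisory"
    return "auto"
```

A check like this does not detect prompt injection. It only limits what a manipulated agent can cause, which is the part you can verify deterministically.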

The Tiered Automation Model: A Practical Compromise

One practical way SOCs operationalize these boundaries is through tiered automation.

Tier 1 – Fully Automated

This tier covers low-risk, high-volume work. Known benign alerts, well-understood false positives, and enrichment tasks that do not change system state belong here. AI excels at clustering, correlating, and resolving this work quickly, while deterministic guardrails enforce fixed limits and auditability.

Tier 2 – Automated Investigation With Human Approval

This tier includes most meaningful security incidents. Suspicious identity activity, lateral movement, and anomalous data access often require containment that is disruptive but not existential. Here, AI performs the investigation end to end. It gathers evidence, builds timelines, correlates across tools, and recommends actions. Humans approve or modify those actions and provide feedback that improves future behavior.

Tier 3 – Human Decision With AI Support

Low-volume, high-impact incidents live here. Examples include suspected compromise of crown-jewel systems, incidents with regulatory or safety implications, and scenarios involving senior executives or cross-tenant blast radius. AI supports decision-making through summaries, correlation, and scenario analysis. Humans retain ownership of trade-offs, communications, and final execution.

Once teams adopt this model, the original question becomes easier to answer. Tier 3 decisions, along with Tier 2 actions that cross specific risk thresholds, should never run unattended.
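As a rough illustration, the tier assignment can be written as a small deterministic function. This is a sketch under assumptions: the `Incident` attributes and the rule ordering are hypothetical simplifications of the tier descriptions above, and a real deployment would use its own criteria.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    FULLY_AUTOMATED = 1  # Tier 1
    HUMAN_APPROVAL = 2   # Tier 2
    HUMAN_DECISION = 3   # Tier 3


@dataclass(frozen=True)
class Incident:
    # Hypothetical attributes, chosen to mirror the tier descriptions.
    changes_system_state: bool
    crown_jewel: bool
    regulatory_or_safety: bool
    known_benign_pattern: bool


def assign_tier(incident: Incident) -> Tier:
    # High-impact conditions are checked first so they always win.
    if incident.crown_jewel or incident.regulatory_or_safety:
        return Tier.HUMAN_DECISION
    # Tier 1 is limited to well-understood work that does not change state.
    if incident.known_benign_pattern and not incident.changes_system_state:
        return Tier.FULLY_AUTOMATED
    # Everything else defaults to automated investigation with approval.
    return Tier.HUMAN_APPROVAL


def never_unattended(tier: Tier, crosses_risk_threshold: bool) -> bool:
    # Tier 3, plus Tier 2 actions above a risk threshold, always need a human.
    return tier is Tier.HUMAN_DECISION or (
        tier is Tier.HUMAN_APPROVAL and crosses_risk_threshold
    )
```

Note the default: anything that is not clearly Tier 1 or Tier 3 lands in Tier 2, so an unclassified case fails toward human approval rather than toward full automation.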

Encoding the Boundary in Policy

It’s one thing to say “we’ll never automate high-impact actions,” and another to encode that into your stack. Practically, you want a policy engine that sits between AI agents and your tools.

Actions are defined in a catalog (e.g. quarantine endpoint, disable account, block IP, rotate keys, delete logs). Each action has attributes: risk level, approval requirements, allowed environments, per-tenant overrides. The AI can request actions; the policy engine decides whether to execute automatically, create an approval task, or deny and require manual handling.

This is where deterministic systems shine. Policies can be reviewed by risk, legal, and compliance teams, version-controlled and tested, and audited. AI operates inside these boundaries rather than redefining them on the fly.
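A minimal Python sketch of such a policy engine might look like the following. It is not any vendor's implementation: the `ActionPolicy` fields, the three-level risk scale, the tenant name, and the `evaluate` function are all assumptions made for illustration. The catalog entries reuse the example actions named above.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"  # deny and require manual handling


@dataclass(frozen=True)
class ActionPolicy:
    risk: str                             # "low", "medium", or "high" (illustrative scale)
    allowed_environments: frozenset[str]
    never_auto: bool = False
    tenant_overrides: dict[str, Decision] = field(default_factory=dict)


# Hypothetical catalog; in practice this would live in version control.
CATALOG = {
    "block_ip": ActionPolicy("low", frozenset({"prod", "dev"})),
    "quarantine_endpoint": ActionPolicy("medium", frozenset({"prod", "dev"})),
    "disable_account": ActionPolicy(
        "high",
        frozenset({"prod", "dev"}),
        tenant_overrides={"tenant-a": Decision.DENY},
    ),
    "delete_logs": ActionPolicy("high", frozenset(), never_auto=True),
}


def evaluate(action: str, environment: str, tenant: str) -> Decision:
    """Decide what happens to an action requested by the AI."""
    policy = CATALOG.get(action)
    # Unknown actions, never-auto actions, and disallowed environments are denied.
    if policy is None or policy.never_auto:
        return Decision.DENY
    if environment not in policy.allowed_environments:
        return Decision.DENY
    # Per-tenant overrides take precedence over the default risk rule.
    if tenant in policy.tenant_overrides:
        return policy.tenant_overrides[tenant]
    # Only low-risk actions execute without a human in this sketch.
    return Decision.EXECUTE if policy.risk == "low" else Decision.REQUIRE_APPROVAL
```

Because the catalog is plain data, risk and compliance teams can review it in a pull request, and each rule can be covered by a unit test. In this sketch a tenant override can loosen a policy as well as tighten it, so you may prefer to restrict overrides to stricter decisions only.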

Morpheus as an Example of Policy-Governed Autonomy

Different vendors are converging on this pattern in different ways. D3 Security’s Morpheus AI SOC is one example of what this can look like in practice.

Morpheus operates as an overlay above existing security tools, ingesting alerts from SIEM, XDR, EDR, cloud platforms, and SaaS environments. It uses a domain-specific investigative framework and LLMs to investigate every alert, and it triages around 95 percent of them in under two minutes in most environments. The engine generates full investigation workflows on the fly, but exposes them as a visible sequence of steps and evidence, so analysts can review and tune them. Actions are executed with governed guardrails: approvals, per-tenant isolation for MSSPs, and full audit trails.

In other words, Morpheus treats Tier 1 (and much of Tier 2) as automatable with supervision. It gives you a place to mark certain actions or conditions as “approval required” or “never auto,” and it keeps high-risk and high-ambiguity decisions in the human lane while doing most of the legwork to help humans make good calls quickly.

A Practical Test for Any AI SOC Program

If you’re planning or evaluating an AI SOC initiative, here’s a quick checklist to drive an honest conversation:

1. Define your tiers: What use cases belong in Tier 1, 2, and 3? Which actions are always Tier 3?

2. List your non-negotiables: Actions that must never be fully automated, and contexts where automation is always advisory-only.

3. Map to policies: Can you express those decisions in a policy engine the AI must go through, or are they trapped as tribal knowledge?

4. Validate with scenarios: Run tabletop exercises, including adversarial and edge-case scenarios.

5. Instrument and watch: Track override rates, false positives/negatives, and “automation regret” incidents, and adjust tiers and policies based on what you learn.
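For the last item, the tracking can start very simply. The sketch below computes override and “automation regret” rates per tier from a list of action records. The `ActionRecord` fields are hypothetical, and it assumes one possible definition of regret: an automated action that later had to be rolled back.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionRecord:
    tier: int
    overridden: bool  # a human changed or rejected the AI's recommendation
    regretted: bool   # assumed definition: the action later had to be rolled back


def rates_by_tier(records):
    """Return override and regret rates keyed by tier."""
    totals = {}
    for record in records:
        t = totals.setdefault(record.tier, {"n": 0, "overridden": 0, "regretted": 0})
        t["n"] += 1
        t["overridden"] += record.overridden  # bools count as 0 or 1
        t["regretted"] += record.regretted
    return {
        tier: {
            "override_rate": t["overridden"] / t["n"],
            "regret_rate": t["regretted"] / t["n"],
        }
        for tier, t in totals.items()
    }
```

A rising override rate in Tier 2 suggests the recommendations need tuning. Any non-zero regret rate in Tier 1 suggests something in that tier may belong in Tier 2.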

AI SOC Maturity Is Measured by Where Humans Step In

A mature AI SOC is defined by what it intentionally chooses not to automate, and by an architecture that enforces that line.

If you’d like a more structured framework for this, our white paper on blending deterministic workflows with AI goes deeper into tiered automation, policy design, and AI governance for both enterprises and MSSPs. Teams that understand where automation belongs, and where it does not, move faster without losing control. They let AI absorb volume and repetition, while humans retain ownership of judgment, accountability, and consequence.

That boundary is the real advantage.