What MSSP Customers Say About D3

“D3 is giving a much better possibility for our business to grow, so even though we are rapidly growing, we’ll be able to scale up to a lot more customers without adding more staff.”

—Philip Lyngø, Security & Analytics Manager, Trifork Security

“It was really on the focus of ingenuity, the integrations with other platforms that were commonplace within our clients’ technology stack, and the commitment to improving the technology so that we could have greater automation, greater integration, and just greater decisioning that we need to do as security analysts for our customers.”

—Tony UcedaVélez, CEO & Founder, Versprite

Easy Automation for Growing MSSPs

Getting into automation as an MSSP might seem out of reach. It can be expensive, and you need specialized knowledge to get the most out of it. There are many possible approaches, and the guidance that vendors provide is rarely tailored to your needs.

This is why D3 created and implemented the only playbook you need to easily serve your first 50 clients. With this single workflow, you can make the most out of your investigation and engineering teams, maximizing the number of clients each can serve.

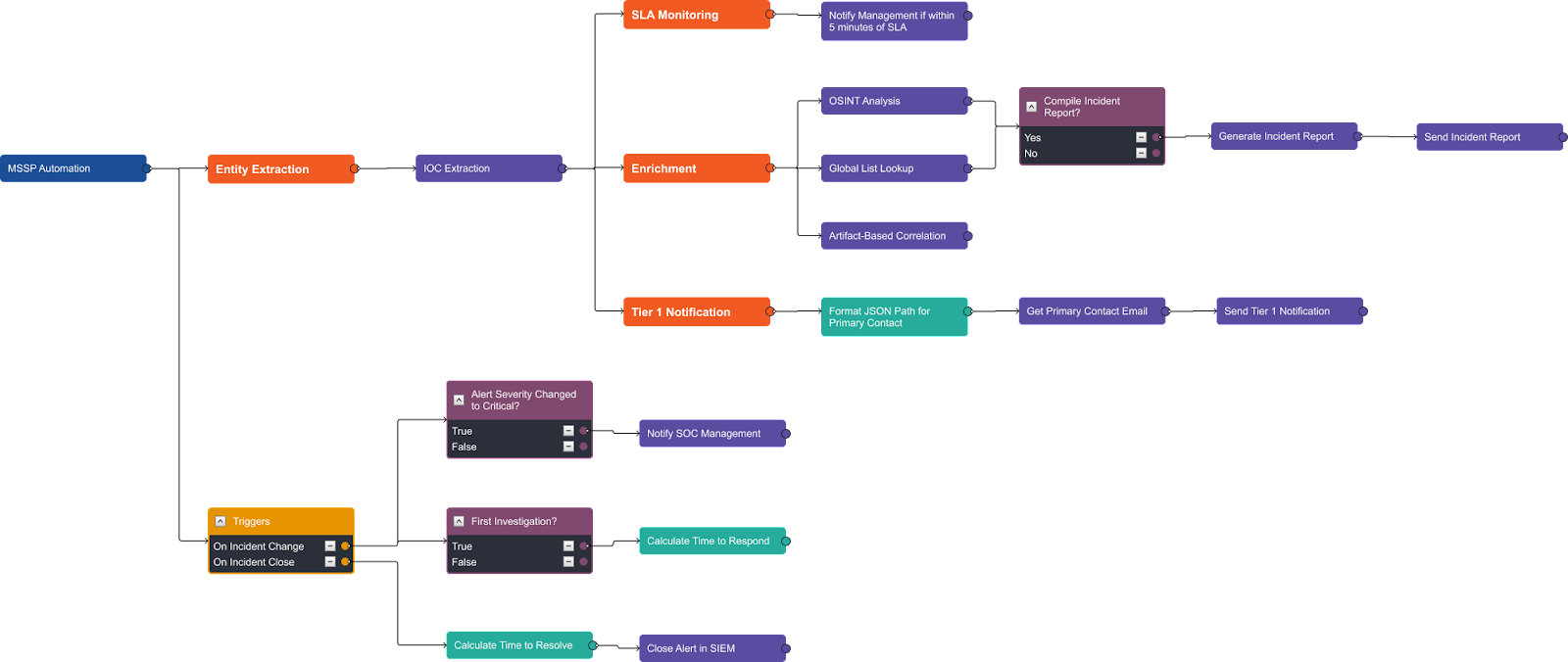

MSSP Master Playbook

High-Level Structure

The playbook has six main sections:

- IOC Extraction

- SLA Monitoring

- Enrichment & Correlation

- Reporting

- Tier 1 Notification

- KPI Tracking

We will go through each of these sections in detail.

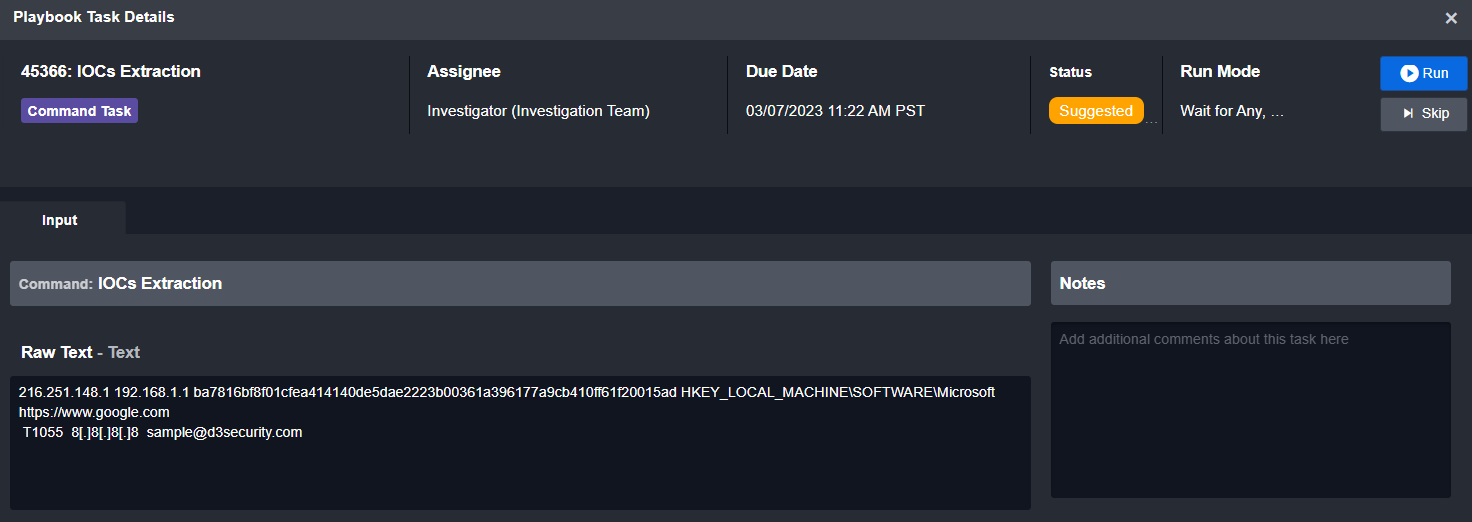

IOC Extraction


The first stage of all of D3’s automated workflows is normalization. This is especially important for MSSPs because you may be managing half a dozen different data sources, each with alerts that are structured differently. Without normalization, you will have to build a unique playbook for each data source.

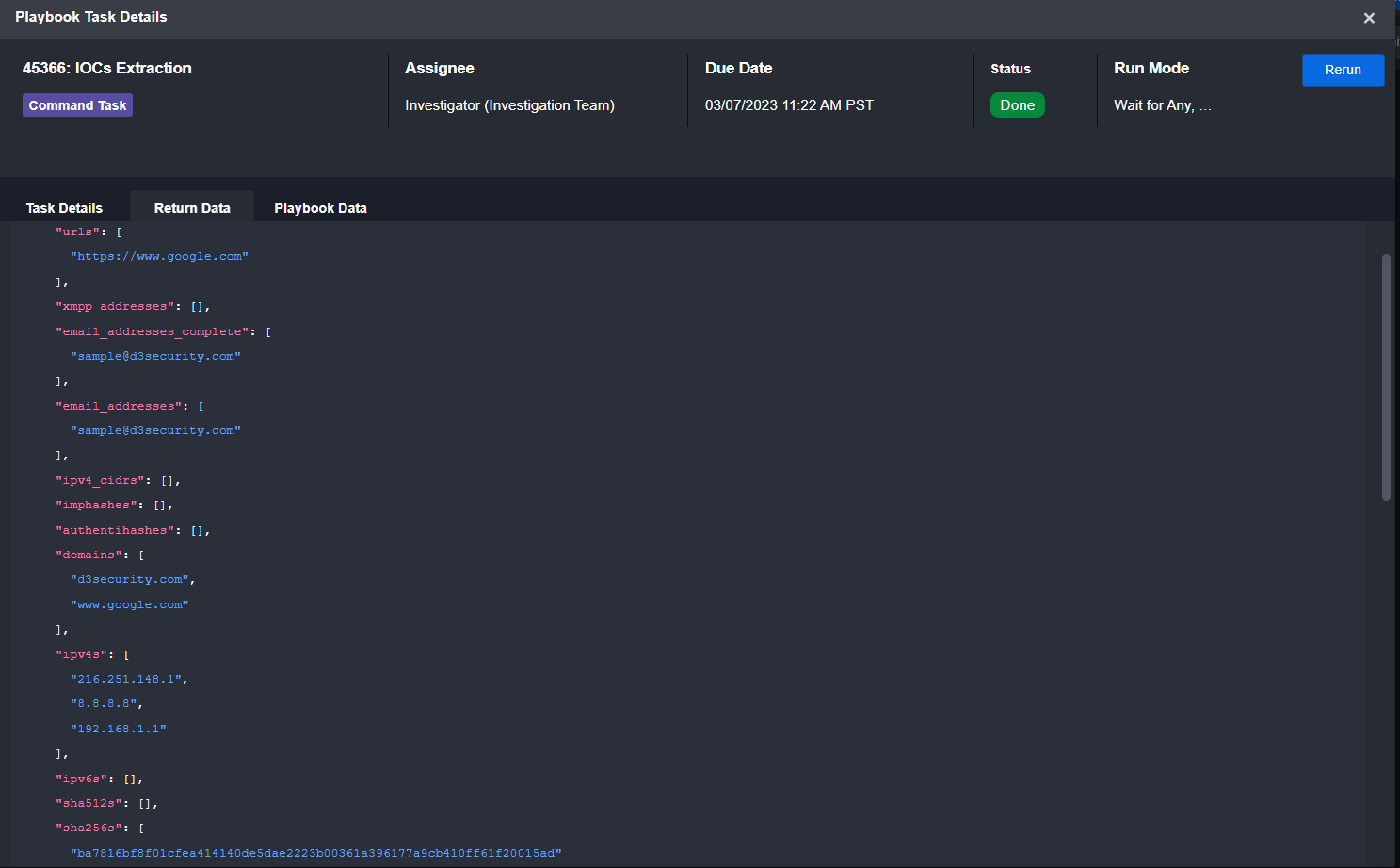

In the above workflow, we push all raw alert data through one of D3’s system commands, IOC Extraction, to pull out all IOCs and map them to standard fields.

Here is a sample of how the task takes raw input and outputs a standard JSON object:

Input

Output
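To illustrate the idea of normalization independently of D3's own IOC Extraction command, here is a minimal Python sketch. It is not D3's implementation: the regular expressions, the field names (`urls`, `ipv4_addresses`, and so on), and the two sample alerts are all assumptions chosen for illustration. It shows how alerts with different structures can be reduced to one standard JSON shape, so that a single downstream playbook can consume them.

```python
import json
import re

# Simplified, illustrative patterns (assumptions); real IOC parsing needs stricter rules.
IOC_PATTERNS = {
    "urls": re.compile(r"https?://[^\s\"'<>]+"),
    "ipv4_addresses": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "sha256_hashes": re.compile(r"\b[a-fA-F0-9]{64}\b"),
    "email_addresses": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
}


def extract_iocs(raw_alert: str) -> dict:
    """Return one sorted, de-duplicated list of matches per standard field."""
    return {
        field: sorted(set(pattern.findall(raw_alert)))
        for field, pattern in IOC_PATTERNS.items()
    }


# Two hypothetical alerts from differently structured sources.
siem_alert = '{"src": "203.0.113.7", "request": "http://malicious.example/payload.exe"}'
edr_alert = "process=dropper.exe remote_ip=203.0.113.7 user=alice@example.com"

for alert in (siem_alert, edr_alert):
    # Both alerts come out with the same set of keys, regardless of input format.
    print(json.dumps(extract_iocs(alert), indent=2))
```

Because every source produces the same keys, the later stages (enrichment, correlation, notification) only need to be written once.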

SLA Monitoring


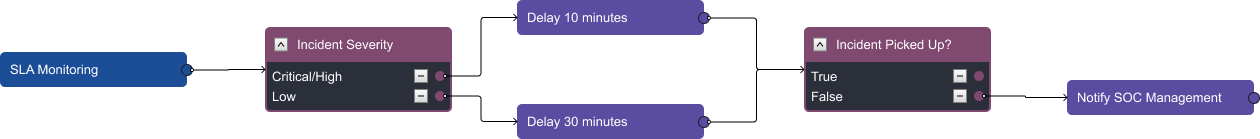

Your customers expect you to respond to incidents within a specific amount of time, which generally depends on the severity of the incident. Here, a nested playbook reviews the incident severity and sets a timer according to your SLA. Both the time and the severity can be easily customized. In this example, if the severity is critical or high, a 10-minute timer starts. If no one has picked up the ticket when the timer expires, the playbook notifies SOC management and provides the incident number and URL so they can assign it to someone on shift.

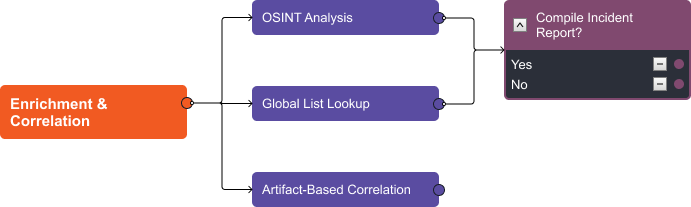
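The SLA logic described in this section can be sketched in a few lines of Python. This is a rough illustration, not the D3 nested playbook itself. The 10-minute limit for critical and high severity comes from the example above, while the medium and low limits, the incident field names, and the URL are assumptions.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Minutes an incident may stay unassigned before escalation.
# critical/high follow the example in the text; medium/low are assumed values.
SLA_MINUTES = {"critical": 10, "high": 10, "medium": 60, "low": 240}


def sla_escalation(incident: dict, now: datetime) -> Optional[str]:
    """Return a message for SOC management if the pickup SLA is breached, else None."""
    if incident.get("assignee"):
        return None  # an analyst has already picked up the ticket
    limit = timedelta(minutes=SLA_MINUTES[incident["severity"].lower()])
    if now - incident["created_at"] < limit:
        return None  # the timer has not expired yet
    minutes = int(limit.total_seconds() // 60)
    return (
        f"Incident {incident['number']} ({incident['severity']}) has been "
        f"unassigned for at least {minutes} minutes: {incident['url']}"
    )


# Hypothetical incident record.
incident = {
    "number": "INC-1042",
    "severity": "critical",
    "assignee": None,
    "created_at": datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc),
    "url": "https://d3.example/incidents/INC-1042",
}
print(sla_escalation(incident, datetime(2024, 1, 1, 12, 11, tzinfo=timezone.utc)))
```

Keeping the limits in a single table mirrors the point that the time and severity are meant to be customized per SLA.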

Enrichment & Correlation

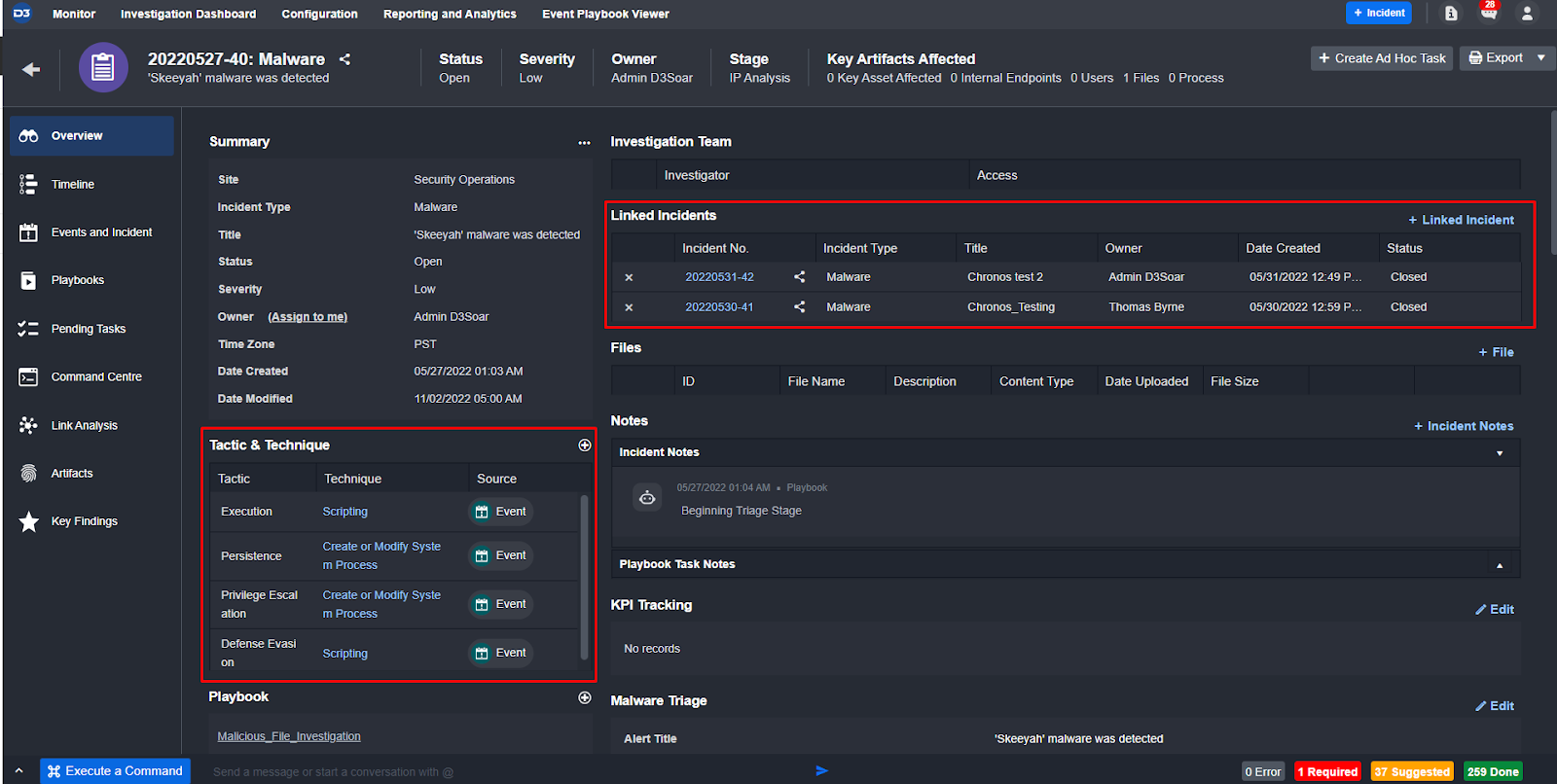

Every incident created in D3 and run through this playbook will have enrichment and correlation executed on its artifacts. The playbook uses OSINT libraries to search for the reputations of URLs, file hashes, IPs, and domains found in the alert. Then, it summarizes the results and presents them to the analyst to review. At the same time, this workflow checks D3 global lists, such as allow/block lists and asset values, for entries that indicate a higher or lower priority. Finally, it searches through D3’s local database to find other incidents with matching artifacts and links them together in the incident overview.
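As a rough sketch of the correlation and list-checking ideas (not D3's internal logic), the Python below links incidents that share at least one artifact and suggests a priority change based on allow/block lists. The function names, the "raise"/"lower"/"unchanged" labels, and the sample data are all hypothetical.

```python
from collections import defaultdict


def correlate(incidents: dict) -> dict:
    """Map each incident ID to the IDs of other incidents sharing an artifact."""
    by_artifact = defaultdict(set)
    for incident_id, artifacts in incidents.items():
        for artifact in artifacts:
            by_artifact[artifact].add(incident_id)
    related = {incident_id: set() for incident_id in incidents}
    for ids in by_artifact.values():
        for incident_id in ids:
            related[incident_id] |= ids - {incident_id}
    return related


def priority_hint(artifacts: set, allow_list: set, block_list: set) -> str:
    """Suggest a priority change: blocked artifacts raise it, all-allowed lowers it."""
    if artifacts & block_list:
        return "raise"
    if artifacts and artifacts <= allow_list:
        return "lower"
    return "unchanged"


# Hypothetical incidents, keyed by ID, each with a set of extracted artifacts.
incidents = {
    "INC-1": {"203.0.113.7", "malicious.example"},
    "INC-2": {"203.0.113.7"},
    "INC-3": {"198.51.100.4"},
}
print(correlate(incidents))
print(priority_hint(incidents["INC-1"], allow_list={"198.51.100.4"}, block_list={"malicious.example"}))
```

Indexing incidents by artifact first avoids comparing every pair of incidents directly, which matters once the local database holds many of them.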

Here, you can see all the information automatically gathered and enriched by the playbook:

MITRE TTPs & Related Incidents

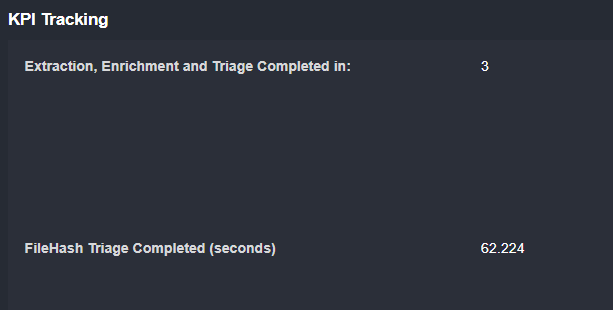

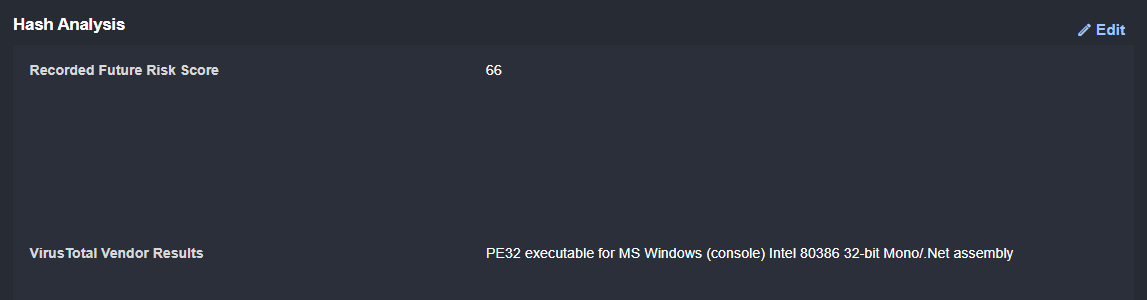

KPI Tracking

OSINT Results

Once the analyst has reviewed the information, they can decide if a customized incident report should be generated and sent to the client.

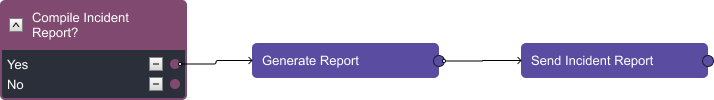

Reporting

A significant portion of your team’s time may be spent writing incident reports. This is expected, as you can charge for reporting as a higher-tier service. With D3, you can automatically export any incident into a custom report, as shown below.

You can customize any client-facing documentation such as this one with your own branding.

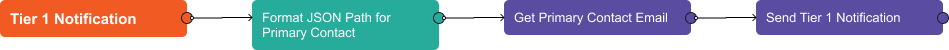

Tier 1 Notification

Many of our customers are required to send a notification to their clients if an incident has specific artifacts or severity. With this playbook, you can populate and send this email automatically.

The playbook searches the alert data for the client’s company name, then gets their primary contact email from a D3 global list. Then, the email is populated in the final task, including incident details such as severity and artifacts.

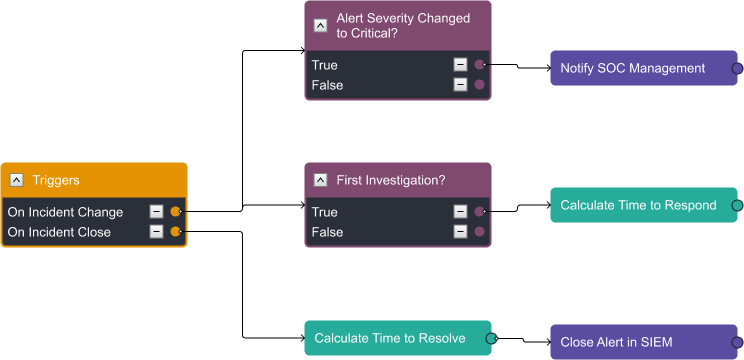
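The notification steps above (find the client name in the alert, look up the primary contact, populate the email) can be sketched as follows. This is a minimal illustration using Python's standard `email.message.EmailMessage`. The contact table stands in for a D3 global list, and the company names, addresses, and incident fields are assumptions.

```python
from email.message import EmailMessage
from typing import Optional

# Stand-in for a global list of client name -> primary contact (hypothetical data).
CLIENT_CONTACTS = {
    "acme corp": "soc-contact@acme.example",
    "globex": "security@globex.example",
}


def build_notification(alert_text: str, incident: dict,
                       sender: str = "soc@mssp.example") -> Optional[EmailMessage]:
    """Build the client email, or return None if no known client is named in the alert."""
    lowered = alert_text.lower()
    for company, contact in CLIENT_CONTACTS.items():
        if company in lowered:
            msg = EmailMessage()
            msg["From"] = sender
            msg["To"] = contact
            msg["Subject"] = f"{incident['severity'].upper()} incident {incident['number']}"
            msg.set_content(
                f"Severity: {incident['severity']}\n"
                f"Artifacts: {', '.join(incident['artifacts'])}\n"
            )
            return msg
    return None


# Hypothetical alert and incident details.
alert = "Tenant: Acme Corp | Suspicious outbound connection to 203.0.113.7"
incident = {"number": "INC-1042", "severity": "high", "artifacts": ["203.0.113.7"]}
message = build_notification(alert, incident)
print(message)
```

The sketch only builds the message. Actually sending it (for example over SMTP) is left out, since that depends on your mail infrastructure.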

KPI Tracking

Tracking time to respond and time to resolve is key for both management and client relations. This playbook is designed to automatically track when the incident is assigned to an analyst and when it is closed. These metrics can be included in the incident report and Tier 1 notifications described above.

Additionally, after the time to resolve is calculated, MSSPs often ask us to close the related alert in the SIEM or original data source that generated it.
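The two KPIs reduce to simple timestamp arithmetic, as in the hedged Python sketch below. It assumes that time to respond is measured from incident creation to analyst assignment, and time to resolve from creation to closure. Your own SLA definitions may differ, and the timestamps are invented for illustration.

```python
from datetime import datetime, timedelta


def compute_kpis(created_at: datetime, assigned_at: datetime,
                 closed_at: datetime) -> dict:
    """Return both KPIs as timedeltas (definitions are assumptions, see above)."""
    return {
        "time_to_respond": assigned_at - created_at,
        "time_to_resolve": closed_at - created_at,
    }


# Hypothetical incident timeline.
kpis = compute_kpis(
    created_at=datetime(2024, 1, 1, 12, 0),
    assigned_at=datetime(2024, 1, 1, 12, 7),
    closed_at=datetime(2024, 1, 1, 13, 30),
)
for name, value in kpis.items():
    print(f"{name}: {value}")
```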

Implementation

Now the big question: “How difficult is this to implement?”

We regularly deploy this workflow and get to go-live for our MSSP clients within four weeks of signing the deal. The implementation is split into four phases:

- Preparation (1 week)

- Execution (2 weeks)

- User Acceptance Testing (UAT) (1 week)

- Go-Live

In the preparation phase, the focus is on ingesting your data into your dev platform. This data could be from your SIEM, EDR, email, or other sources. Once your team has approved the data in dev, we move to phase two.

Execution is where D3 engineers adapt the MSSP playbook to your data sources. This means making sure that the alerts are normalized, the enrichment is running successfully, the primary contact addresses are being pulled correctly, and more. This process takes approximately two weeks to complete. Then, we create your production site and prepare it for UAT in week four.

UAT is where your team will review the workflows and send any final requests before go-live.

Then, data ingestion is turned on in production and your team is up and running.

Book a call with us to get answers to your questions about this workflow and to learn how D3 works with MSSPs to improve efficiency and scale their businesses.