How D3 Morpheus helps MSSPs serve AI-forward and AI-cautious clients from a single multi-tenant platform.

Executive Summary

MSSPs face a structural inefficiency: their client portfolios contain fundamentally conflicting risk profiles. While some organizations demand AI-driven automation to reduce mean-time-to-respond (MTTR), regulated sectors—including finance, healthcare, and government—often enforce strict policies prohibiting AI involvement in security decision-making.

This heterogeneity forces MSSPs into a binary choice. Legacy SOAR platforms (Torq, Tines, Palo Alto XSOAR, Swimlane) lack tenant-level AI governance, compelling providers to either enforce a single operational standard across all clients or fragment their operations across multiple platform instances. Both approaches degrade margins and scalability.

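D3 Morpheus resolves this architectural gap through three specific capabilities:

- Multi-tenant segmentation and policy isolation. AI configuration is a tenant-level attribute, so conflicting operational models can run side by side in a single instance.

- Granular AI control. The Attack Path Discovery task lets MSSPs include or exclude AI on a per-playbook basis within each tenant.

- Self-healing integrations. Connector maintenance is automated across tenants, decoupling integration overhead from client count.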

Table of Contents

- The MSSP Challenge: One Platform, Many Policies

- The D3 Morpheus MSSP Architecture

- Client Segmentation in Practice

- Phased Implementation Model: Moving Clients from Deterministic to Autonomous

- Competitive Analysis: Architectural Constraints at Scale

- The Business Case: Unit Economics of the AI-Ready MSSP

- Market Outlook: The Necessity of Mixed-Mode Governance

- Partner with D3 Security

The MSSP Challenge: One Platform, Many Policies

The primary obstacle to MSSP scalability is non-uniformity. Differing client AI policies now act as hard operational constraints on service delivery models.

The Spectrum of Client AI Readiness

AI-Prohibited (Regulated)

Organizations whose internal governance policies, or their interpretation of external compliance obligations (e.g., GDPR, HIPAA), forbid non-deterministic decision-making. These environments require auditable, rule-based playbook execution with zero AI variance.

AI-Cautious (Transitional)

Organizations seeking efficiency gains but restricted by risk aversion. These clients typically authorize AI pilots for high-volume, low-impact alerts (e.g., phishing) while mandating traditional workflows for critical assets.

AI-Forward (Autonomous)

Organizations prioritizing speed and scale. These clients require full AI-driven triage to maximize SOC throughput and minimize analyst overhead.

An effective MSSP must serve all three profiles from a single operational platform, without the complexity and cost of maintaining separate environments.

Why Traditional SOAR Platforms Fail MSSPs

Legacy SOAR platforms were not designed for this reality. Their limitations compound at MSSP scale:

Global-Only AI Configuration

Platforms like Torq, Tines, XSOAR, and Swimlane do not support toggling AI capabilities at the tenant or playbook level. AI features, where they exist at all, are platform-wide settings. An MSSP cannot isolate AI-driven logic to specific tenants, making it impossible to serve regulated and non-regulated clients from a single instance.

Weak or Absent Multi-Tenancy

True multi-tenant architecture with isolated, independently configurable tenant environments is not a core strength of legacy SOAR platforms. MSSPs frequently resort to separate instances per client or crude tenant separation that limits configuration granularity.

Integration Maintenance Scales Linearly with Clients

Every additional client tenant means additional integrations to maintain. When APIs change, the MSSP must update connectors across every affected tenant. At scale, this maintenance burden becomes the dominant operational cost—consuming resources that should be spent on security delivery.

Rigid Service Tiers

MSSPs cannot offer AI-cautious clients a measured transition because the underlying platforms do not support mixed-mode operation. The MSSP’s service tiers are limited by the platform’s rigidity.

The D3 Morpheus MSSP Architecture

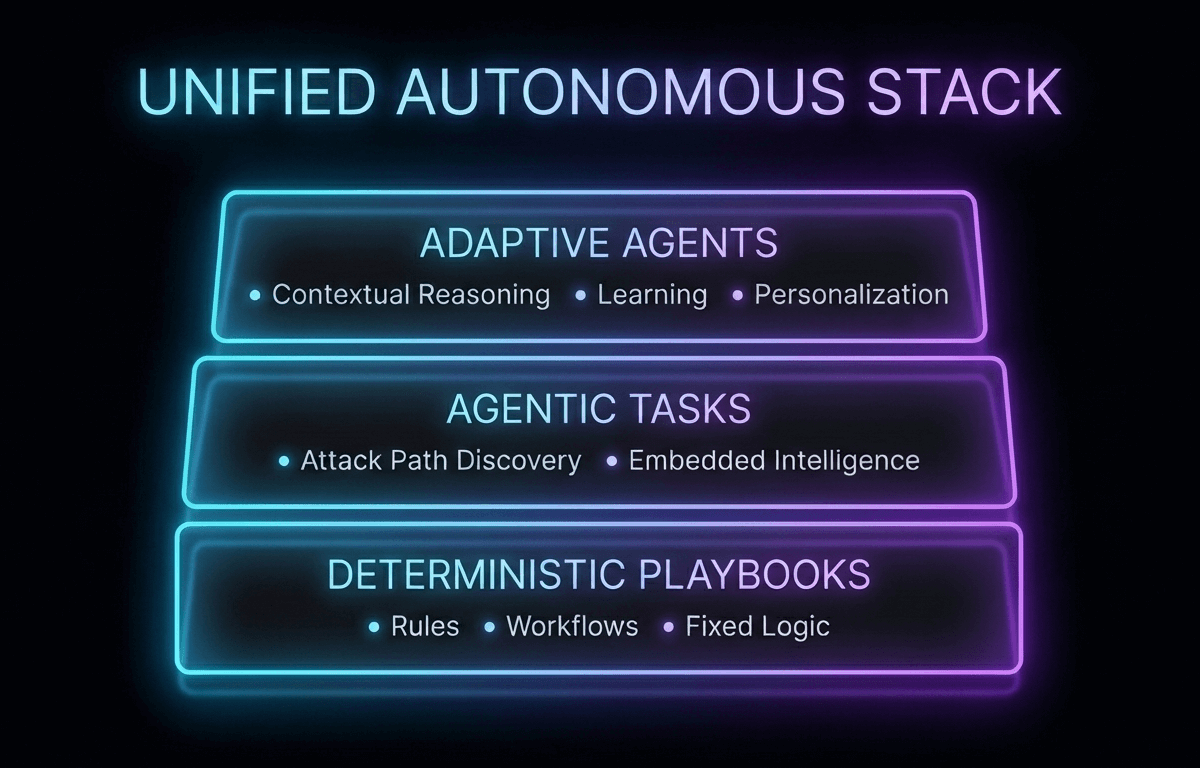

D3 Morpheus resolves the operational conflicts of MSSP delivery through three integrated architectural capabilities.

Multi-Tenant Segmentation & Policy Isolation

Morpheus utilizes a native multi-tenant architecture that enforces logical isolation between client environments. Unlike legacy platforms where AI is a global setting, Morpheus defines AI configuration as a tenant-level attribute.

This allows an MSSP to run conflicting operational models simultaneously within a single instance:

Tenant A (Financial Services): Deterministic Execution

The tenant is configured to block all AI-driven tasks. All playbooks execute pre-defined logic paths. This satisfies strict regulatory requirements for auditable, human-confirmed decision-making.

Tenant B (Healthcare): Hybrid Execution

The tenant permits AI-driven tasks for specific high-volume vectors: Attack Path Discovery is enabled on phishing and credential-abuse playbooks. Traditional, deterministic playbooks are retained for endpoint alerts involving clinical systems and other sensitive assets.

Tenant C (Technology): Autonomous Execution

The tenant is configured for full AI triage, with Morpheus’s Attack Path Discovery enabled on all playbooks. The platform autonomously correlates data and maps attack paths across all alert types, giving the client maximum SOC throughput.
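To make “AI as a tenant-level attribute” concrete, the following is a minimal Python sketch of how the three conflicting policies above might coexist in one instance. It is not D3’s configuration format or API: `AIMode`, `TenantPolicy`, `ai_permitted`, the tenant IDs, and the alert category strings are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class AIMode(Enum):
    """Hypothetical tenant-level AI modes mirroring Tenants A, B, and C."""
    DETERMINISTIC = "deterministic"  # Tenant A: all AI-driven tasks blocked
    HYBRID = "hybrid"                # Tenant B: AI only for listed alert categories
    AUTONOMOUS = "autonomous"        # Tenant C: AI for every alert category


@dataclass(frozen=True)
class TenantPolicy:
    name: str
    mode: AIMode
    # Only consulted in HYBRID mode: alert categories where AI tasks may run.
    ai_categories: frozenset[str] = field(default_factory=frozenset)

    def ai_permitted(self, alert_category: str) -> bool:
        """Return True if this tenant's policy allows AI-driven tasks for the category."""
        if self.mode is AIMode.DETERMINISTIC:
            return False
        if self.mode is AIMode.AUTONOMOUS:
            return True
        return alert_category in self.ai_categories


# One shared instance holding three isolated policies (illustrative names).
TENANTS = {
    "tenant-a": TenantPolicy("Financial Services", AIMode.DETERMINISTIC),
    "tenant-b": TenantPolicy(
        "Healthcare", AIMode.HYBRID, frozenset({"phishing", "credential_abuse"})
    ),
    "tenant-c": TenantPolicy("Technology", AIMode.AUTONOMOUS),
}

for tenant_id, policy in TENANTS.items():
    # Same two alert categories, evaluated against each tenant's own policy.
    print(tenant_id, policy.ai_permitted("phishing"), policy.ai_permitted("endpoint"))
```

Running it prints `tenant-a False False`, `tenant-b True False`, and `tenant-c True True`: identical phishing and endpoint alerts are treated differently because the decision is looked up per tenant rather than read from a single global flag.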

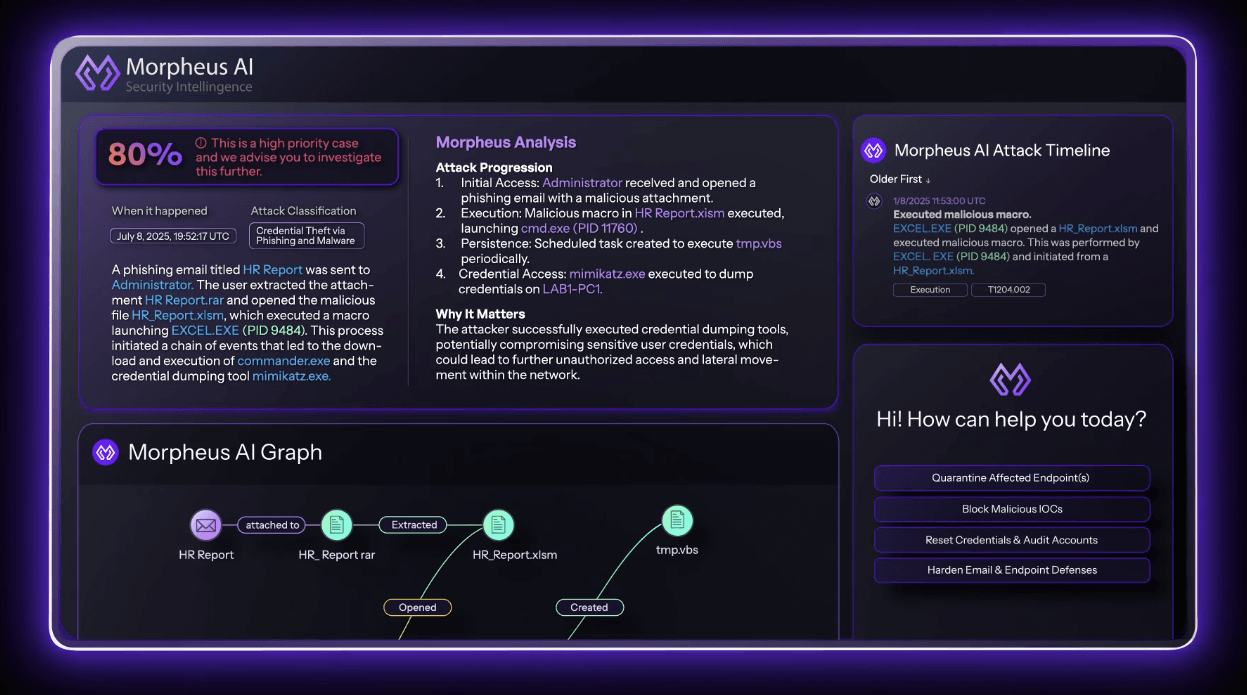

Granular AI Control: The Attack Path Discovery Mechanism

Within each tenant, AI configuration operates at the individual playbook level through D3’s Attack Path Discovery task, which gives MSSPs precise control over where AI operates for each client. The task functions as a conditional gate for the AI engine:

Included

The platform ingests the alert, autonomously queries integrated tools, builds a link graph of the attack, and determines severity.

Excluded

The platform bypasses the AI engine entirely and executes standard SOAR workflows.
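The gate can be sketched in a few lines of Python. This sketch assumes, as the tenant examples suggest, that a tenant-level block overrides any playbook that includes the task. `Playbook`, `execute`, and the two placeholder paths are hypothetical, and their string return values stand in for real triage results.

```python
from dataclasses import dataclass


@dataclass
class Playbook:
    name: str
    # True when the playbook includes the Attack Path Discovery task.
    includes_attack_path_discovery: bool


def run_ai_triage(alert: dict) -> str:
    # Placeholder for the AI path: query tools, build the link graph, score severity.
    return f"ai-triage:{alert['id']}"


def run_deterministic_workflow(alert: dict) -> str:
    # Placeholder for the standard, rule-based SOAR workflow.
    return f"deterministic:{alert['id']}"


def execute(playbook: Playbook, alert: dict, tenant_allows_ai: bool) -> str:
    """Route an alert through the AI engine only when both gates are open."""
    if tenant_allows_ai and playbook.includes_attack_path_discovery:
        return run_ai_triage(alert)
    # Task excluded, or AI blocked by tenant policy: bypass the AI engine entirely.
    return run_deterministic_workflow(alert)


print(execute(Playbook("phishing", True), {"id": 7}, tenant_allows_ai=True))
print(execute(Playbook("phishing", True), {"id": 7}, tenant_allows_ai=False))
```

The first call takes the AI path; the second falls back to the deterministic workflow even though the playbook includes the task, because the tenant blocks AI.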

This per-playbook granularity is unique to Morpheus and is the architectural feature that enables three distinct, billable service tiers:

Managed SOAR (Deterministic)

Standard playbook automation for compliance-focused clients.

Hybrid AI Response

Selected playbooks configured with Attack Path Discovery based on client preferences. AI handles noise reduction (Tier 1); analysts handle complex investigations (Tier 2/3). Suitable for AI-cautious clients who want to experiment gradually.

Full AI SOC Service

All playbooks configured with Attack Path Discovery. The MSSP delivers fully autonomous AI SOC operations. Suitable for AI-forward clients seeking maximum efficiency.

The MSSP can offer these as tiered service packages, and clients can upgrade from one tier to the next without migration, platform changes, or operational disruption.
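Because the tiers are defined by which playbooks include Attack Path Discovery, a tenant’s tier can be derived from its configuration rather than from a separate deployment. A minimal Python sketch, assuming a tenant’s playbooks are represented as a hypothetical mapping from playbook name to an “includes Attack Path Discovery” flag:

```python
def service_tier(playbooks: dict[str, bool]) -> str:
    """Map per-playbook Attack Path Discovery flags to one of the three tiers.

    `playbooks` maps playbook name -> True if Attack Path Discovery is included.
    """
    enabled = sum(playbooks.values())  # True counts as 1, False as 0
    if enabled == 0:
        return "Managed SOAR"
    if enabled == len(playbooks):
        return "Full AI SOC Service"
    return "Hybrid AI Response"


print(service_tier({"phishing": True, "endpoint": False}))  # Hybrid AI Response
```

Under this model, moving a client up a tier is a matter of flipping flags on playbooks that already exist, which is why an upgrade requires no migration.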

Operational Scalability: Self-Healing Integrations

In standard SOAR deployments, integration maintenance scales linearly with tenant count. A typical MSSP client tenant connects to 15–30 security tools: SIEM, EDR, email security, identity providers, threat intelligence feeds, ticketing systems, and cloud security platforms. An MSSP with 50 clients may therefore manage 750–1,500 integration connections. A single API change from one vendor can break connectors in every tenant that uses that vendor, potentially all 50, triggering a high volume of support tickets and engineering operational expense (OpEx).
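The connection estimate is a simple product of client count $C$ and tools per tenant $T$, and the worst-case reach of one vendor API change is every tenant connected to that vendor, at most $C$:

$$
N_{\text{conn}} = C \times T,\qquad 50 \times 15 = 750,\qquad 50 \times 30 = 1{,}500,\qquad N_{\text{affected}} \le C = 50
$$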

On traditional SOAR platforms, this is the single largest operational cost driver. It consumes engineering hours, creates client-facing incidents when playbooks break, and limits the number of clients an MSSP can effectively manage. It is the primary reason MSSP SOAR margins are thinner than they should be.

Morpheus’s self-healing integrations eliminate this at the architectural level:

- Continuous monitoring. Morpheus monitors the health and behavior of every integration across every tenant: API responses, schema changes, endpoint modifications, and authentication status.

- Autonomous remediation. When a change is detected, Morpheus automatically adapts the affected connectors. The update propagates across all tenants that use the affected integration.

- Zero client impact. Playbooks continue executing without interruption. The MSSP’s clients experience no service degradation from integration drift.
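Propagating one fix to every affected tenant depends on tenants sharing a single connector definition rather than holding per-tenant copies. The Python sketch below illustrates that idea together with simple schema-drift detection (comparing the fields a connector expects against a live response). It is an illustration under stated assumptions, not Morpheus’s mechanism: `Connector`, `detect_drift`, `remediate`, and `ExampleEDR` are hypothetical, and the sketch takes the vendor’s field renames as given rather than inferring them.

```python
from dataclasses import dataclass, field


@dataclass
class Connector:
    """A single shared connector definition that tenants reference rather than copy."""
    vendor: str
    # Maps the field name playbooks use -> the field name in the vendor's API response.
    field_map: dict[str, str] = field(default_factory=dict)

    def normalize(self, response: dict) -> dict:
        """Translate a raw vendor response into the fields playbooks expect."""
        return {ours: response.get(theirs) for ours, theirs in self.field_map.items()}


def detect_drift(connector: Connector, response: dict) -> list[str]:
    """Return the vendor fields the connector expects but the live response lacks."""
    return [theirs for theirs in connector.field_map.values() if theirs not in response]


def remediate(connector: Connector, renames: dict[str, str]) -> None:
    """Apply known vendor field renames (old name -> new name) to the shared connector."""
    connector.field_map = {
        ours: renames.get(theirs, theirs) for ours, theirs in connector.field_map.items()
    }


# Three tenants reference the same connector object.
edr = Connector("ExampleEDR", {"host": "hostname", "severity": "sev"})
tenants = {"tenant-a": edr, "tenant-b": edr, "tenant-c": edr}

live = {"host_name": "ws-042", "sev": "high"}  # vendor renamed "hostname" to "host_name"
missing = detect_drift(edr, live)              # ['hostname']
remediate(edr, {"hostname": "host_name"})      # one update to the shared definition...
print(tenants["tenant-c"].normalize(live))     # ...is seen by every tenant
```

The final line prints `{'host': 'ws-042', 'severity': 'high'}`. Had each tenant held its own copy of the connector, the same rename would have required one fix per tenant, which is the linear maintenance cost described above.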

Client Segmentation in Practice

The following matrix demonstrates how a single Morpheus instance supports divergent operational models simultaneously. All configurations utilize shared infrastructure while enforcing strict logical isolation between tenants.

| Tenant Profile | Configuration Strategy | Operational Outcome |
|---|---|---|
| Regulated Financial | Deterministic Mode: All playbooks execute pre-defined logic. Attack Path Discovery tasks are disabled for every playbook in this tenant. | Compliance Assurance: 100% auditable execution path. Zero probabilistic decision-making. Maintenance overhead handled via self-healing integrations. |
| Healthcare Provider | Hybrid Mode: AI tasks enabled for high-volume ingress vectors (e.g., Phishing). Deterministic workflows retained for clinical endpoints and sensitive data. | Risk-Adjusted Efficiency: Automated noise reduction for non-critical alerts; human-in-the-loop control for HIPAA-regulated assets. |
| Technology Enterprise | Autonomous Mode: Attack Path Discovery enabled on all ingestion pipelines. Full AI-driven triage and correlation active. | Throughput Maximization: Analyst resources reallocated from Tier 1 triage to high-value investigations and threat hunting. |
| Government Agency | Parallel Execution: Network anomaly playbooks utilize Attack Path Discovery; all other categories remain deterministic. | Empirical Validation: Side-by-side comparison of AI vs. traditional outputs to establish performance baselines for future expansion. |

Phased Implementation Model: Moving Clients from Deterministic to Autonomous

Beyond static segmentation, Morpheus provides a technical framework for migrating clients up the automation curve. This capability allows MSSPs to onboard risk-averse organizations and incrementally validate AI performance without platform migration.

The MSSP-Guided Adoption Framework

Baseline Configuration (Deterministic SOAR)

Deploy the tenant with standard, rule-based playbooks. The client operates under a traditional managed service model. Integration maintenance is automated via self-healing connectors, establishing immediate margin improvement over legacy SOAR delivery.

Targeted Pilot (High-Volume/Low-Risk)

Select a single high-volume alert category (typically Phishing or Credential Abuse). Enable the Attack Path Discovery task for this specific playbook. Run AI-driven triage in parallel with existing workflows to generate comparative performance data.
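Parallel execution can be sketched as running both paths on the same alert while acting only on the deterministic verdict, with the AI verdict logged for comparison. In this Python sketch, `PilotRecord`, `triage_in_parallel`, the two stand-in triage functions, and their sender-reputation thresholds are hypothetical; `ai_path` merely stands in for a playbook that includes the Attack Path Discovery task.

```python
from dataclasses import dataclass
from typing import Callable

# A triage path takes an alert and returns a verdict string.
Triage = Callable[[dict], str]


@dataclass(frozen=True)
class PilotRecord:
    alert_id: str
    deterministic_verdict: str  # authoritative: drives the actual response
    ai_verdict: str             # shadow only: logged for later comparison


def triage_in_parallel(alert: dict, deterministic: Triage, ai: Triage) -> PilotRecord:
    """Run both paths on the same alert and record both verdicts."""
    return PilotRecord(alert["id"], deterministic(alert), ai(alert))


# Hypothetical stand-ins for the existing playbook and the AI path.
def rule_based(alert: dict) -> str:
    return "malicious" if alert["sender_reputation"] < 0.2 else "benign"


def ai_path(alert: dict) -> str:
    return "malicious" if alert["sender_reputation"] < 0.3 else "benign"


alerts = [
    {"id": "a1", "sender_reputation": 0.10},
    {"id": "a2", "sender_reputation": 0.25},
    {"id": "a3", "sender_reputation": 0.90},
]
log = [triage_in_parallel(a, rule_based, ai_path) for a in alerts]
disagreements = [r.alert_id for r in log if r.deterministic_verdict != r.ai_verdict]
print(disagreements)  # ['a2']
```

Disagreements such as `a2` are the cases an analyst reviews to judge which path was right, producing the comparative data the next step analyzes.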

Quantitative Efficacy Analysis

Review pilot data with the client after a defined window (e.g., 30 days). Metrics should focus on: False Positive Reduction Rate, Mean Time to Triage (MTTT) improvement, and accuracy of AI-generated correlations vs. manual analyst findings.
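The three metrics can be computed from the pilot log with basic arithmetic. The definitions below are assumptions rather than D3’s: false positive reduction is taken as the relative drop in false positives escalated to analysts versus a pre-pilot baseline, MTTT improvement as the relative drop in mean triage time, and correlation accuracy as the share of reviewed alerts where the AI’s correlations matched the analyst’s findings. The input numbers are made up for illustration.

```python
from statistics import mean


def relative_improvement(baseline: float, pilot: float) -> float:
    """Fractional reduction from baseline; 0.25 means 25% lower than baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (baseline - pilot) / baseline


def pilot_report(
    baseline_fp: int,
    pilot_fp: int,
    baseline_triage_minutes: list[float],
    pilot_triage_minutes: list[float],
    ai_matches_analyst: list[bool],
) -> dict[str, float]:
    return {
        # Assumed definition: relative drop in false positives reaching analysts.
        "false_positive_reduction_rate": relative_improvement(baseline_fp, pilot_fp),
        # Relative drop in Mean Time to Triage (MTTT).
        "mttt_improvement": relative_improvement(
            mean(baseline_triage_minutes), mean(pilot_triage_minutes)
        ),
        # Share of reviewed alerts where AI correlations matched analyst findings.
        "correlation_accuracy": sum(ai_matches_analyst) / len(ai_matches_analyst),
    }


# Illustrative inputs, not real pilot data.
report = pilot_report(
    baseline_fp=400,
    pilot_fp=100,
    baseline_triage_minutes=[20, 40],
    pilot_triage_minutes=[10, 14],
    ai_matches_analyst=[True] * 9 + [False],
)
print(report)
```

With these inputs the report shows a 0.75 false positive reduction rate, a 0.6 MTTT improvement (mean triage time falls from 30 to 12 minutes), and 0.9 correlation accuracy.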

Iterative Expansion

Upon validation, enable AI tasks for additional playbook categories sequentially. This granular control allows for reversible decisions: if performance degrades in a specific category, the playbook can be instantly reverted to deterministic mode without impacting other services.
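A minimal Python sketch of sequential, reversible expansion, assuming each category’s pilot yields a single score that is compared against a client-agreed threshold. `expand_sequentially`, the scores, and the 0.8 threshold are hypothetical.

```python
from typing import Callable


def expand_sequentially(
    ai_enabled: dict[str, bool],
    candidates: list[str],
    evaluate: Callable[[str], float],
    threshold: float,
) -> dict[str, bool]:
    """Enable AI for one playbook category at a time, reverting any that underperform.

    `evaluate` stands in for a pilot window on that category and returns a score.
    Each decision touches a single key, so reverting one category leaves every
    other category's setting unchanged.
    """
    config = dict(ai_enabled)  # copy so the caller's configuration is not mutated
    for category in candidates:
        config[category] = True
        if evaluate(category) < threshold:
            config[category] = False  # revert this category to deterministic mode
    return config


# Hypothetical pilot scores per category.
scores = {"credential_abuse": 0.88, "malware": 0.61}
start = {"phishing": True, "credential_abuse": False, "malware": False}
result = expand_sequentially(start, ["credential_abuse", "malware"], lambda c: scores[c], 0.8)
print(result)  # {'phishing': True, 'credential_abuse': True, 'malware': False}
```

The malware category is reverted without affecting phishing or credential abuse, which already run with AI.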

Service Tier Elevation

As the percentage of AI-handled alerts increases, the client is migrated to higher-value service tiers (e.g., from “Managed SOAR” to “Full AI SOC Service”).

This framework functions as a technical upsell mechanism. Operational efficiency gains are shared with the client through improved SLAs, justifying higher service fees while reducing the MSSP’s cost-to-serve.

Competitive Analysis: Architectural Constraints at Scale

| MSSP Capability | D3 Morpheus | Torq / Tines | XSOAR | Swimlane |
|---|---|---|---|---|
| Multi-Tenant Architecture | Native | Limited | Add-on | Limited |
| Per-Client AI/Traditional Toggle | Yes | No | No | No |
| Per-Playbook AI Configuration | Yes | No | No | No |
| Self-Healing Integrations | Autonomous | Manual | Manual | Manual |
| Client-Level AI Adoption Path | Gradual per client | Global only | N/A | N/A |
| Integration Maintenance (at scale) | Eliminated | Scales linearly | Scales linearly | Scales linearly |
| Regulatory Compliance Segmentation | Per-tenant policy | Manual separation | Manual separation | Manual separation |

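The “Global Policy” Limitation

Where Torq, Tines, XSOAR, and Swimlane offer AI features at all, they are platform-wide settings. An MSSP cannot isolate AI-driven logic to specific tenants or playbooks, so regulated and AI-forward clients cannot share a single instance.

The “Linear Scaling” Trap

On these platforms, every new tenant adds integrations that must be maintained manually. Engineering effort grows in step with client count, limiting the number of clients an MSSP can serve profitably.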

The Business Case: Unit Economics of the AI-Ready MSSP

Deploying a platform that decouples client volume from engineering overhead fundamentally alters the MSSP P&L.

OpEx Reduction

- Single platform for all client profiles. Eliminate the cost and complexity of maintaining separate environments for different client types.

- Self-healing integrations at scale. Recapture the 30–40% of operational time currently consumed by integration maintenance across client tenants.

- Reduced onboarding friction. New clients deploy on the same platform regardless of their AI readiness, with configuration rather than migration determining their service model.

Margin Expansion

- Tiered AI service offerings. Create differentiated service packages (Managed SOAR, Hybrid AI Response, and Full AI SOC Service) at increasing price points, all delivered from the same platform.

- Built-in upsell path. The gradual AI adoption framework creates a natural progression from lower to higher service tiers, with each upgrade justified by demonstrated AI performance.

- Competitive differentiation. Offer prospective clients what no competitor can: a single platform that respects their current AI policies while providing a clear, low-risk path to AI-driven operations when they are ready.

Client Retention

- No forced AI adoption. Clients never feel pressured to adopt AI before they are ready. The MSSP’s value proposition works regardless of where the client sits on the AI readiness spectrum.

- Continuous value demonstration. As AI-cautious clients pilot and expand AI triage, the MSSP can present quantitative evidence of improvement—reinforcing the client relationship and justifying continued investment.

- Platform stickiness. Once a client has built playbooks, configured integrations, and begun an AI adoption journey within Morpheus, the switching cost to a competing platform is substantial.

Market Outlook: The Necessity of Mixed-Mode Governance

The MSSP market is bifurcating. Providers restricted by legacy architectures must force all clients into a single operational box, either fully deterministic or fully AI-driven, which limits their total addressable market (TAM).

D3 Morpheus offers the only architecture designed for operational heterogeneity. By enforcing strict tenant isolation while enabling granular AI adoption, it allows MSSPs to service the entire risk spectrum—from the AI-prohibited to the AI-autonomous—from a single, scalable infrastructure.

Partner with D3 Security

D3 Security offers dedicated MSSP partnership programs with volume licensing, shared deployment support, and co-marketing resources.

Contact the D3 MSSP team to schedule a multi-tenant demonstration and explore partnership opportunities.