A New Category Defined: The Unified Intelligence Model (UIM)

The Unified Intelligence Model is the security operations architecture in which a single purpose-built cybersecurity LLM performs complete autonomous investigation of every security alert, correlating all relevant telemetry from all integrated tools simultaneously in a unified context window, from alert ingestion through response recommendation, without inter-agent handoffs, context fragmentation, or coordination overhead, producing a single contiguous reasoning chain and a bespoke response playbook per investigation. D3 Security coins this term to give security leaders precise vocabulary for evaluating AI SOC architectures and for asking vendors the questions that reveal production performance.

Executive Summary

The security operations industry is in the middle of a naming war. “Agentic SOC,” “multi-agent SOC,” “AI-native SOAR,” “AI SOC analyst”: each term describes a different answer to the same underlying problem. Manual alert triage is broken, the global cybersecurity workforce shortage is structural, and legacy Security Orchestration, Automation and Response (SOAR) platforms built on static playbooks have hit their ceiling. The terminology a buyer uses to describe what they need shapes which architectures they evaluate and which trade-offs they accept.

When “agentic SOC” becomes the dominant buying vocabulary, buyers default to evaluating multi-agent architectures, even when those architectures introduce structural failure modes that a unified intelligence approach eliminates by design. This paper introduces the Unified Intelligence Model (UIM) as a precisely defined term with testable properties, giving security leaders a vocabulary that enables accurate architectural comparison.

The UIM in one sentence: A security operations architecture in which a single purpose-built cybersecurity LLM performs complete autonomous investigation from alert ingestion through response recommendation, correlating all evidence simultaneously in a unified context window, without inter-agent handoffs, with a single contiguous audit trail, and with native capabilities for threat intelligence hunting, vulnerability response planning, and proactive threat management, maintained by Self-Healing Integrations that solve API drift autonomously across 800+ connected tools.

D3 Security’s Morpheus AI is the first and only platform to fully implement all four UIM pillars at production scale. The 24-month development investment, 60-specialist team, proprietary Attack Path Discovery framework, and Self-Healing Integration architecture represent a capability gap that cannot be closed by assembling general-purpose models through an orchestration layer. This paper demonstrates why and gives security leaders the framework to verify the claim in any vendor evaluation.

Table of Contents

- The Orchestration Tax: Why Coordination Is the Problem, Not the Solution

- Defining the Unified Intelligence Model (UIM)

- The Four Pillars of the Unified Intelligence Model

- Attack Path Discovery: The UIM in Practice

- UIM Beyond Triage: Threat Intel, Vulnerability Response, and Proactive Hunting

- Why the 24-Month Development Investment Cannot Be Shortcut

- Unified Audit Trail, Pricing, and Compliance Architecture

- A Decision Framework for Security Leaders

- Measured Outcomes, FAQ, and Next Steps

- See the Unified Intelligence Model in Production

1. The Orchestration Tax: Why Coordination Is the Problem, Not the Solution

Agent orchestration in security operations is architecturally appealing because it mirrors how human teams organize complex work: divide the problem into specializations, assign each to an expert, coordinate the outputs. The problem is that AI agents cannot replicate the judgment properties that make human specialization effective. They process whatever input they receive, without knowing what was lost in the handoff, without being able to ask upstream agents for the original raw data, and without the contextual judgment to recognize when a summary is insufficient.

Every coordination layer in a multi-agent system imposes what D3 Security defines as the orchestration tax: the cumulative cost in latency, signal fidelity, adversarial attack surface, audit complexity, API maintenance burden, and pricing unpredictability that accrues from routing investigation work through multiple coordinated agents rather than performing it within a single unified system.

Latency Tax

Each inter-agent handoff introduces queuing delay and serialization overhead. At 4,400+ daily alerts, pipeline latency compounds. Because attackers can achieve lateral movement in under 4 minutes, the latency tax costs SOC teams the containment window.

Fidelity Tax

Each agent summarizes its output for the next. Signal fidelity degrades at every handoff. Living-off-the-land attacks, multi-stage campaigns, and identity-based threats require the full raw evidence that summaries lose.

API Drift Tax

Each agent’s static connectors break when vendor APIs change, which is the same structural problem that made legacy SOAR unsustainable. 50 tools × 4–6 updates/year = integration disruptions every 6 weeks. This affects every agent in the pipeline separately.
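
The figures above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the tool count, update range, and six-week interval come from the text, while the "implied breaking fraction" is an inference added here, not a figure from this paper. It assumes that only some API updates actually break a connector, which is one way to reconcile 200 to 300 updates per year with a disruption roughly every six weeks.

```python
# Back-of-envelope check of the API drift figures quoted in the text.
TOOLS = 50                      # from the text
UPDATES_PER_TOOL = (4, 6)       # from the text: 4-6 API updates per tool per year
DISRUPTION_INTERVAL_WEEKS = 6   # from the text
WEEKS_PER_YEAR = 52


def updates_per_year(tools: int, per_tool: int) -> int:
    """Total vendor API updates across the stack in one year."""
    return tools * per_tool


def implied_breaking_fraction(tools: int, per_tool: int, interval_weeks: float) -> float:
    """Share of updates that would have to be breaking to yield one
    disruption every `interval_weeks` (an inference, not a source figure)."""
    disruptions_per_year = WEEKS_PER_YEAR / interval_weeks
    return disruptions_per_year / updates_per_year(tools, per_tool)


for per_tool in UPDATES_PER_TOOL:
    total = updates_per_year(TOOLS, per_tool)
    fraction = implied_breaking_fraction(TOOLS, per_tool, DISRUPTION_INTERVAL_WEEKS)
    print(f"{per_tool} updates/tool -> {total} updates/year, "
          f"implied breaking share {fraction:.1%}")
```

In a multi-agent pipeline where each agent maintains its own connectors, the same calculation would apply once per agent.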

Error Amplification Tax

Upstream agent hallucinations are treated as ground truth by downstream agents. What would be an isolated error in a single model becomes a multi-agent consensus finding.

Audit Opacity Tax

Compliance evidence spans 5+ agent logs and requires cross-system reconstruction under tight NIS2/DORA/SEC timelines, at exactly the moment when engineering resources are consumed by incident response.

Cost Unpredictability Tax

Multi-agent pipelines tokenize data once per agent, duplicating token charges across 5 pipeline stages. Usage-based billing makes investigation cost unpredictable, creating coverage vs. budget tension during incidents.
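
The duplication effect can be sketched as simple multiplication. This is a minimal model, assuming every stage re-tokenizes the same evidence in full; the evidence size and per-token price below are hypothetical placeholders, not vendor figures.

```python
def billed_tokens(evidence_tokens: int, stages: int) -> int:
    """Tokens billed when each pipeline stage tokenizes the same evidence once."""
    if evidence_tokens < 0 or stages < 1:
        raise ValueError("evidence_tokens must be >= 0 and stages >= 1")
    return evidence_tokens * stages


def investigation_cost(evidence_tokens: int, stages: int, price_per_1k: float) -> float:
    """Usage-based cost of one investigation at a given price per 1,000 tokens."""
    return billed_tokens(evidence_tokens, stages) / 1000 * price_per_1k


EVIDENCE_TOKENS = 20_000   # hypothetical evidence size for one alert
PRICE_PER_1K = 0.01        # hypothetical price, for illustration only

single_pass = investigation_cost(EVIDENCE_TOKENS, stages=1, price_per_1k=PRICE_PER_1K)
five_stage = investigation_cost(EVIDENCE_TOKENS, stages=5, price_per_1k=PRICE_PER_1K)
print(f"single pass: {single_pass:.2f}, five stages: {five_stage:.2f}")
```

Under this assumption the cost ratio equals the number of stages. Real pipelines that pass summaries downstream would bill fewer tokens, at the price of the fidelity loss described earlier.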

The orchestration tax is not a product of poor engineering. It is a fundamental property of any system that distributes investigation work across coordinated components. Eliminating it requires eliminating the orchestration: consolidating investigation intelligence within a single unified model that performs complete analysis without coordination.

2. Defining the Unified Intelligence Model (UIM)

Unified Intelligence Model (UIM): Formal Definition

A security operations architecture in which a single purpose-built cybersecurity LLM performs complete autonomous investigation of every security alert, correlating all relevant telemetry from all integrated tools simultaneously in a unified context window, from alert ingestion through response recommendation, without inter-agent handoffs, context fragmentation, or coordination overhead, producing a single contiguous reasoning chain and a bespoke response playbook per investigation. Self-Healing Integrations maintain operational integrity across the connected tool ecosystem autonomously.

UIM Compared to Adjacent Architectures

| Architecture | Defining Property | Why It Is Not UIM | Example Vendors (2026) |
|---|---|---|---|
| Legacy SOAR | Static playbooks authored by SOAR engineers execute predefined logic. | No AI-driven investigation. Logic in the playbook, not the model. SOAR architect dependency unchanged. | Splunk SOAR, Palo Alto XSOAR, IBM QRadar SOAR (legacy) |
| AI-Augmented SOAR | General-purpose LLM interface added to a legacy SOAR engine. Speeds playbook authoring. | Static playbooks still required. AI assists workflow builders; does not investigate autonomously. SOAR architect dependency preserved. | ServiceNow SecOps with AI, Microsoft Sentinel Copilot on SOAR, Tines + LLM |
| Multi-Agent / Agentic SOC | Multiple specialized AI agents coordinate to handle discrete investigation functions. | Coordination overhead. Context fragmentation. Hallucination propagation. API drift per agent. Fragmented audit trail. Usage-based billing. | Dropzone AI, Stellar Cyber Socrates, ReliaQuest GreyMatter, Cyware |
| Unified Intelligence Model (UIM) | Single purpose-built cybersecurity LLM. Complete investigation in one inference pass. No agent coordination. | This IS UIM. All four pillars must be demonstrable at production scale. | D3 Morpheus AI (sole current full UIM implementation at production scale) |

Why Named Concepts Create Accountability

D3 Security’s “Attack Path Discovery” became a citable reference point that forced competitors to situate their products relative to D3’s named capability. The Unified Intelligence Model serves the same function at the architectural level. A named concept with formal definition and testable properties creates a verification framework. Buyers can ask any vendor whether their architecture qualifies, and the four pillars in Section 3 provide a specific audit checklist for the answer.

3. The Four Pillars of the Unified Intelligence Model

A platform claiming to implement the UIM must demonstrate all four pillars in a production environment. The absence of any one means the platform is a multi-agent or AI-augmented architecture with UIM marketing, not a genuine UIM implementation.

| Pillar | Definition | How to Verify in a PoC | Morpheus AI Implementation |
|---|---|---|---|
| Pillar 1: Single Inference Context | Complete investigation from alert ingestion through response recommendation occurs within a single model’s context window. All relevant telemetry from all integrated tools present simultaneously. No summarization handoffs. | Demonstrate that the correlation stage has access to the full raw telemetry from the detection stage, not a summary of it. Ask to see the context window contents during a live investigation. | APD framework holds complete evidence from all 800+ integrated tools in one context window. No handoffs. Single inference pass per investigation. |
| Pillar 2: Purpose-Built Domain LLM | The LLM is trained specifically on cybersecurity data: attack patterns, MITRE ATT&CK TTPs, kill chain progressions, investigation methodologies, and SOC decision workflows. Not a fine-tuned general-purpose model. | Ask for training data composition and development timeline. A general-purpose LLM with a security-themed system prompt or 6-month fine-tune does not qualify. Request attack simulation accuracy data. | 24-month development by 60 specialists (red teamers, data scientists, SOC analysts, AI engineers). Purpose-built from the ground up. Customer-expandable. Attack simulation validates accuracy before every release. |
| Pillar 3: Contiguous Audit Trail | Every investigation produces a single, complete, human-readable reasoning chain from triggering event through every correlation step to the final response recommendation in one system of record. No reconstruction required for compliance review. | After a complex multi-stage investigation, ask the vendor to produce the complete reasoning chain from one system view, not correlated from multiple agent logs. Time the production of a NIS2 72-hour notification package. | Every investigation produces a single-system evidence chain. Native compliance packages for NIS2, DORA, SEC 8-K, cyber insurance. SOC 2 Type II certified. |
| Pillar 4: Autonomous Self-Maintenance | The platform maintains its own integration ecosystem. When vendor APIs change, the platform detects the change, analyzes its semantic meaning, regenerates integration code, validates it, and deploys the correction without engineering tickets or visibility gaps. | Ask for a documented example of an API change being detected and repaired autonomously. Ask for time-from-detection-to-restored-functionality, measured in a production environment. | Self-Healing Integrations: drift detected in minutes, semantic analysis and code regeneration automated, operation restored in hours. Documented across 800+ integrations. No manual remediation required. |
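
The all-or-nothing rule for the four pillars can be captured as a small checklist during a proof of concept. The sketch below is an illustrative aid for evaluators, not a D3 tool; the class and function names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class PillarEvidence:
    """One boolean per pillar: was it demonstrated in production during the PoC?"""
    single_inference_context: bool
    purpose_built_domain_llm: bool
    contiguous_audit_trail: bool
    autonomous_self_maintenance: bool


def missing_pillars(evidence: PillarEvidence) -> list[str]:
    """Names of the pillars the vendor failed to demonstrate."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


def qualifies_as_uim(evidence: PillarEvidence) -> bool:
    """A platform qualifies only if no pillar is missing."""
    return not missing_pillars(evidence)


vendor = PillarEvidence(
    single_inference_context=True,
    purpose_built_domain_llm=True,
    contiguous_audit_trail=False,   # e.g. reasoning had to be stitched from several logs
    autonomous_self_maintenance=True,
)
print(qualifies_as_uim(vendor), missing_pillars(vendor))
```

Recording the result per pillar, rather than as a single yes/no, keeps the evaluation notes specific about what was and was not shown.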

4. Attack Path Discovery: The UIM in Practice

Attack Path Discovery (APD) is Morpheus AI’s named implementation of the UIM’s core investigation methodology. APD is the operating principle of the entire architecture, not a feature added to a platform. Every alert triggers a complete APD investigation automatically, without static playbooks, without SOAR architects, and without manual correlation.

North–South (Vertical) Correlation

Deep inspection into the alert’s origin tool: process trees and parent-child execution chains, registry keys and file system telemetry, tool-specific behavioral artifacts, and temporal sequencing before and after the trigger. Answers: what exactly happened inside the tool that generated this alert?

East–West (Horizontal) Correlation

Simultaneous correlation across the full security stack: EDR, SIEM, identity systems, cloud security logs, network monitoring, DLP, email security, and threat intelligence feeds, all in the same context window at the same time. Answers: where did this threat come from, where is it going, and what has it already touched?

APD Output: What Every Investigation Produces

| Output | Description | Why It Matters |
|---|---|---|
| Complete Attack Timeline | Chronological reconstruction of every event from initial indicator through all discovered stages, with tool, timestamp, and relationship annotations. | Analyst sees the entire attack path without building it manually. Reviewable and correctable. |
| Full Reasoning Chain | Every inference the LLM made: every data point considered, every correlation drawn, all in transparent, human-readable form. | Every reasoning step is reviewable, editable, and overridable. No black boxes. Compliance-ready evidence. |
| MITRE ATT&CK Mapping | Automatic mapping of discovered attack stages to MITRE ATT&CK and MITRE D3FEND techniques. | Enables detection engineering follow-up, red team exercise targeting, and gap analysis. |
| Bespoke Response Playbook | A contextual response playbook generated at runtime from the specific evidence found, tailored to the target, the tool stack, and the organization’s SOC preferences. Not a static template selected from a library. | Response actions reflect what actually happened, not what a playbook author anticipated might happen. |
| Incident Response Priority Score (IRPS) | A composite severity score weighing impact, confidence, context, and existing containment, boiling down the full investigation to an at-a-glance urgency ranking. | Analysts focus immediately on highest-priority confirmed threats. No manual severity triage required. |
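
To make the idea of a composite priority score concrete, here is a minimal sketch of how four inputs could be combined into one ranking value. The actual IRPS formula is not described in this paper: the weights, the 0-to-1 input scale, the containment discount, and all names below are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights; the real IRPS weighting is not published here.
WEIGHTS = {"impact": 0.4, "confidence": 0.3, "context": 0.3}
CONTAINMENT_DISCOUNT = 0.5  # hypothetical: full containment halves the score


@dataclass(frozen=True)
class Findings:
    impact: float       # 0.0 (negligible) to 1.0 (critical)
    confidence: float   # 0.0 (speculative) to 1.0 (confirmed)
    context: float      # 0.0 (low-value asset) to 1.0 (crown jewel)
    containment: float  # 0.0 (nothing contained) to 1.0 (fully contained)


def priority_score(f: Findings) -> float:
    """Weighted sum of impact, confidence, and context, discounted by containment."""
    for name in ("impact", "confidence", "context", "containment"):
        value = getattr(f, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    raw = sum(weight * getattr(f, name) for name, weight in WEIGHTS.items())
    return round(100 * raw * (1 - CONTAINMENT_DISCOUNT * f.containment), 1)


active = Findings(impact=0.9, confidence=0.8, context=0.7, containment=0.0)
contained = Findings(impact=0.9, confidence=0.8, context=0.7, containment=1.0)
print(priority_score(active), priority_score(contained))
```

The design point the sketch illustrates is that existing containment lowers urgency without changing the underlying severity inputs, so the same incident drops in the queue once it is contained.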

Why contextual playbooks matter: Static playbooks are designed by humans who anticipate future threats. Contextual playbooks are generated by a model that has already investigated the specific threat it is responding to. The difference in response relevance is proportional to the difference between anticipating a threat and investigating it.

5. UIM Beyond Triage: Threat Intel, Vulnerability Response, and Proactive Hunting

The UIM’s APD framework is not a triage-only capability. The same unified correlation architecture that investigates incoming alerts also enables high-value proactive use cases that extend Morpheus AI’s value across the full SOC mission without additional agents, additional orchestration layers, or additional integration maintenance.

| Use Case | How Morpheus AI APD Handles It | Advantage Over Multi-Agent Alternatives |
|---|---|---|
| Threat Intelligence Ingestion & Environmental Hunting | Morpheus AI ingests third-party threat intelligence feeds (Recorded Future, CrowdStrike Falcon Intel, ISAC feeds, and others). When a new IOC set or TTP profile is ingested, the APD framework automatically runs the indicators across the customer’s full environment, querying every integrated tool for evidence of those specific behaviors. Output: a structured environmental investigation report and a prioritized response playbook for any confirmed matches. | Multi-agent alternatives require additional specialized agents or manual analyst query authoring. Each additional agent requires its own API integrations, API drift maintenance, and orchestration layer additions. In Morpheus AI, threat intel hunting is a native APD query that requires no additional architecture. |
| Vulnerability Scanner Integration & Response Planning | Morpheus AI ingests findings from Tenable, Qualys, Rapid7, and other scanners. The APD framework assesses whether each vulnerability has been exploited in the environment, which assets are at actual risk given the organization’s network topology and tool stack, and what the realistic attack path from that vulnerability looks like. Output: a context-aware response playbook prioritized by actual environmental risk, not CVSS score alone. | Multi-agent vulnerability response requires an additional Vulnerability Assessment Agent integrated to the scanner API, plus orchestration to the existing triage pipeline. Every additional agent compounds the API drift exposure. In Morpheus AI, vulnerability ingestion is a data source addition, not an architectural addition. |
| Proactive Threat Hunting | Analysts describe a suspected attack pattern in natural language. Morpheus AI generates contextual queries across all integrated tools, correlates results via the APD framework, and presents findings as a unified investigation report, not disconnected query outputs across 5–15 separate consoles. | Multi-agent hunting requires either a dedicated Hunting Agent or manual analyst work across tool consoles. Morpheus AI uses the same APD framework with analyst-directed hypotheses. |
| Incident Response & Forensics | For confirmed incidents requiring deep forensic investigation, Morpheus AI applies full APD to reconstruct the complete attack timeline, correlating backward across the full tool stack, identifying patient-zero endpoints, tracing lateral movement, and producing a compliance-ready evidence package. | Multi-agent forensics requires the full pipeline to run in reverse-lookup mode, which was not its designed direction. Context fragmentation that degrades triage quality also degrades forensic reconstruction quality. |

6. Why the 24-Month Development Investment Cannot Be Shortcut

The most important strategic fact about the Unified Intelligence Model is also the one most frequently underestimated: a purpose-built cybersecurity LLM cannot be assembled from parts. It cannot be approximated by fine-tuning a general-purpose model on security vocabulary. It cannot be built in six months. It cannot be created by connecting multiple specialized models through an orchestration layer and calling the result “unified.”

The depth of domain knowledge required to reason correctly about attack progression (distinguishing malicious PowerShell from legitimate administration, linking attack stages that occur 72 hours apart as one campaign, correlating across identity and network boundaries) is acquired through sustained purpose-specific training or not at all.

What the Assembly Fallacy Gets Wrong

Multi-agent architectures often use specialized models for individual pipeline stages: a fine-tuned model for detection, a RAG-augmented model for enrichment, a general-purpose model for response generation. Assembling specialized models connected by coordination layers reintroduces the orchestration tax in a different form. The value of unified intelligence comes from a single model that understands the entire investigation domain simultaneously, not from coordination of models that each understand fragments of it.

The Self-Healing Integration Advantage: Solving API Drift Permanently

D3 Security’s Self-Healing Integrations use a specialized LLM trained on security data schemas from hundreds of vendor products. When a vendor API changes, this LLM analyzes the semantic meaning of the change, understanding that a field renamed from “threat_score” to “risk_level” carries equivalent meaning, and regenerates the integration connector code automatically. The process: drift detected in minutes → semantic analysis → code generation → three-stage validation (syntactic, semantic, integration) → deployment. Total time: hours. No engineering tickets. No visibility gaps.
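
The detect, analyze, remap, validate flow can be illustrated with a toy example built around the “threat_score” to “risk_level” rename mentioned above. This is a sketch under stated assumptions, not D3’s implementation: a hand-written synonym table stands in for the LLM’s semantic analysis, and every function name and sample payload is hypothetical.

```python
# Stand-in for LLM semantic analysis: a hand-written synonym table (assumption).
FIELD_SYNONYMS = {"threat_score": {"risk_level", "severity_score"}}


def detect_drift(expected: set[str], payload: dict) -> set[str]:
    """Fields the connector expects but the vendor payload no longer carries."""
    return expected - set(payload)


def propose_mapping(missing: set[str], payload: dict) -> dict[str, str]:
    """Map each missing field to a new field name when exactly one synonym matches."""
    mapping = {}
    for field in sorted(missing):
        candidates = FIELD_SYNONYMS.get(field, set()) & set(payload)
        if len(candidates) == 1:
            mapping[field] = next(iter(candidates))
    return mapping


def apply_mapping(payload: dict, mapping: dict[str, str]) -> dict:
    """Return a copy of the payload with new field names restored to the old ones."""
    normalized = dict(payload)
    for old_name, new_name in mapping.items():
        normalized[old_name] = normalized.pop(new_name)
    return normalized


def validate(expected: set[str], old_sample: dict, new_sample: dict,
             mapping: dict[str, str]) -> bool:
    """Three checks loosely mirroring syntactic, semantic, and integration validation."""
    # Syntactic: every mapped target actually exists in the new payload.
    if not all(new in new_sample for new in mapping.values()):
        return False
    # Semantic: the renamed field carries the same type of value as before.
    if not all(old in old_sample and type(old_sample[old]) is type(new_sample[new])
               for old, new in mapping.items()):
        return False
    # Integration: after remapping, the connector sees every field it expects.
    return expected <= set(apply_mapping(new_sample, mapping))


expected_fields = {"id", "threat_score"}
old_payload = {"id": "a1", "threat_score": 87}
new_payload = {"id": "a1", "risk_level": 87}

missing = detect_drift(expected_fields, new_payload)
mapping = propose_mapping(missing, new_payload)
if validate(expected_fields, old_payload, new_payload, mapping):
    print(apply_mapping(new_payload, mapping))
```

Note that the semantic check rejects a rename where the value type changes (for example, a numeric score replaced by a string label), which is the kind of case where a name-only mapping would silently corrupt downstream logic.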

Customer-Expandable Intelligence as Competitive Moat

Organizations deploying Morpheus AI can expand and customize the LLM for their specific environment, adding proprietary detection context, organization-specific SOC procedures, and environment-specific asset criticality mappings. This customization becomes an organization-owned intellectual asset that improves over time and grows increasingly difficult for attackers to evade. It is not a vendor-controlled black box. Every step of the reasoning is transparent, reviewable, editable, and overridable by analysts.

7. Unified Audit Trail, Pricing, and Compliance Architecture

Compliance Architecture: Native Output, Not Reconstruction

In the Unified Intelligence Model, compliance evidence is native investigation output, not a post-hoc reporting layer. Every Morpheus AI investigation produces a complete chain from a single system of record: the triggering event, the data analyzed, the full reasoning chain, the response recommendation, and the analyst approval or override record.

| Regulatory Requirement | Multi-Agent System Challenge | Morpheus AI (UIM) Native Output |
|---|---|---|
| NIS2: 72-Hour Notification | Reasoning spans 5 agent logs. Cross-system reconstruction under 72-hour deadline during active incident response. | Complete single-system investigation record available immediately. Root cause in the reasoning chain. No reconstruction required. |
| DORA: 4-Hour Initial Report | “Detection time” may differ across agent system clocks. Incident type classification may conflict between agents. | Single system timestamp. APD classification is the authoritative incident type. All fields from one system. |
| SEC 8-K: 4 Business Days | Scope determination from Investigation Agent may conflict with Correlation Agent findings. No authoritative single source. | Scope visible in APD output from one system. No inter-agent conflicts to resolve. |
| Cyber Insurance Claims | Carriers require AI decision audit trails. Multi-system reconstruction may leave gaps or contradictions. | Complete AI reasoning chain with analyst confirmation at every autonomous decision point. Deterministic/non-deterministic record shows human oversight. |

Pricing Architecture: Flat Subscription, No Usage Fees

Morpheus AI is priced as a platform subscription plus user licenses. There are no per-alert charges, no token fees, no investigation caps, and no usage-based components. D3 Security absorbs all LLM compute costs internally. The APD framework’s contextual query design (analyzing alert context first to determine what tools to query, rather than querying all tools for every alert) reduces per-investigation token consumption, allowing D3 to offer flat pricing at scale.

- Full investigation coverage during breach incidents: no cost pressure to throttle automation when volume spikes

- Threat intel hunting, vulnerability response, and proactive threat hunting included: no per-use charges for extended capabilities

- Predictable annual spend regardless of alert volume fluctuations

- No per-token charges for threat intel ingestion and environmental hunting queries

D3 Security publishes current subscription pricing at d3security.com/morpheus/pricing. Pricing reflects the full Morpheus AI platform including all APD capabilities, Self-Healing Integrations, contextual playbook generation, threat intel ingestion, and vulnerability response planning.

Flat pricing is viable because Morpheus AI’s APD framework uses precise contextual queries rather than broad LLM requests. The model first assesses what data is relevant before querying, which controls token consumption per investigation. Agentic systems that issue broad, undirected queries to the LLM at each pipeline stage cannot predict their per-alert token cost. This is structurally why they pass that cost to customers as usage fees.
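
The "assess relevance first, then query" idea can be sketched as a planning step that narrows the tool list before any data is pulled. This is an illustration only: a static lookup table stands in for the model's relevance assessment, and the routing entries, token estimate, and function names are hypothetical. The tool categories are the ones listed under East–West correlation.

```python
ALL_TOOLS = ["edr", "siem", "identity", "cloud_logs",
             "network", "dlp", "email_security", "threat_intel"]

# Stand-in for the model's relevance assessment (assumption): a static table.
RELEVANT_TOOLS = {
    "phishing": ["email_security", "identity", "edr", "threat_intel"],
    "lateral_movement": ["edr", "identity", "network", "siem"],
}

TOKENS_PER_TOOL_RESULT = 2_500  # hypothetical average, for illustration only


def plan_queries(alert: dict) -> list[str]:
    """Pick the tools to query from the alert context; fall back to all tools."""
    return list(RELEVANT_TOOLS.get(alert.get("type", ""), ALL_TOOLS))


def estimated_tokens(tools: list[str]) -> int:
    """Rough token volume if each queried tool returns an average-sized result."""
    return len(tools) * TOKENS_PER_TOOL_RESULT


alert = {"id": "ALERT-1", "type": "phishing"}
targeted = plan_queries(alert)
print(f"targeted: {estimated_tokens(targeted)} tokens, "
      f"query-everything: {estimated_tokens(ALL_TOOLS)} tokens")
```

The fallback to the full tool list for unrecognized alert types keeps coverage intact when the planner has no basis for narrowing the search.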

Free legacy SOAR migration program available. See d3security.com/legacy-soar-migration-program

8. A Decision Framework for Security Leaders

The following framework helps security leaders select the architecture that best fits their specific operational context. Evaluate each criterion against your organization’s profile.

| Evaluation Criterion | Multi-Agent SOC Fit | Unified Intelligence Model (Morpheus AI) Fit |
|---|---|---|
| Daily alert volume | Under 1,000/day: pipeline latency may be acceptable in PoC. Over 4,000/day: coordination latency risk escalates materially. | Optimized for high volume. Single inference pass maintains consistent sub-2-minute latency regardless of concurrent investigation count. |
| Attack sophistication | Known, categorically distinct attacks: specialized agents perform well. Novel, multi-stage, living-off-the-land attacks: context fragmentation creates coverage gaps. | Purpose-built LLM trained on novel patterns and multi-stage kill chains. No handoff gaps in East–West correlation. Handles living-off-the-land techniques accurately. |
| Tool stack size and update frequency | Small, stable stack (under 10 tools, infrequent updates): manageable API drift exposure. Large, heterogeneous (50+ tools, frequent updates): per-agent API drift compounds to SOAR-level maintenance burden. | Self-Healing Integrations maintain 800+ tools autonomously. API drift solved permanently regardless of stack size or vendor update frequency. |
| Compliance requirements | Low: acceptable. NIS2/DORA/SEC with tight notification timelines: audit trail reconstruction creates compliance risk under pressure. | Full compliance architecture built into native investigation output. Single system of record. Recommended for all regulatory environments. |
| Security team size | Large teams with dedicated integration maintenance capacity may manage multi-agent systems. Small teams cannot absorb the per-agent maintenance overhead. | Zero integration maintenance overhead. Small teams (3–10 analysts) operate at full capability without dedicated integration engineers. |
| Use case breadth | Alert triage only: multi-agent may be adequate. Threat intel hunting, vulnerability response, proactive hunting, IR forensics: each requires additional agents, orchestration, and maintenance. | All extended use cases are native APD capabilities. No additional architecture or agents required. |
| Budget predictability | Usage-based billing creates unpredictable annual cost at high volume or during breach incidents. | Flat subscription. Predictable annual spend regardless of alert volume, investigation count, or extended use case activity. |

9. Measured Outcomes, FAQ, and Next Steps

Frequently Asked Questions

What is the Unified Intelligence Model?

A security operations architecture where a single purpose-built cybersecurity LLM performs complete autonomous investigation (all correlation and all evidence in one context window, with one reasoning chain and one audit trail) without inter-agent handoffs or coordination overhead. D3 Security coined this term in March 2026 to give security leaders precise vocabulary for architectural evaluation.

How does Morpheus AI differ from an agentic SOC platform?

An agentic SOC routes investigation work through multiple coordinated agents with discrete scopes. Morpheus AI performs complete investigation within a single unified model, eliminating coordination latency, context fragmentation, API drift per agent, hallucination propagation, audit trail fragmentation, and token cost duplication, all structural properties of multi-agent architectures.

Can Morpheus AI ingest third-party threat intelligence and hunt across my environment?

Yes. Morpheus AI ingests threat intelligence feeds and runs indicators and TTPs across your full environment via the APD framework. The output is a structured environmental investigation report and a prioritized response playbook for any confirmed matches. This is a native APD capability, not an additional agent or module.

Can Morpheus AI ingest vulnerability scanner findings?

Yes. Morpheus AI ingests findings from Tenable, Qualys, Rapid7, and other scanners. The APD framework produces context-aware prioritization and a response playbook that accounts for your specific environment, not CVSS score ranking alone.

How does Morpheus AI handle API changes from integrated vendors?

Self-Healing Integrations detect API drift within minutes, analyze the semantic meaning of the change, regenerate integration code autonomously, validate it against both old and new data formats, and restore full operation in hours, with no engineering tickets. This solves the API drift problem that both legacy SOAR and multi-agent systems inherit through static connectors.

What does Morpheus AI cost?

Flat subscription plus user licenses. No per-alert, per-token, or per-investigation fees. D3 absorbs all LLM compute costs. Current pricing: d3security.com/pricing. Legacy SOAR customers can migrate at no transition cost via the SOAR Migration Program.

See the Unified Intelligence Model in Production

Request a technical demonstration that tests all four UIM pillars against your environment. Bring the PoC testing framework from Whitepaper 2. D3 will demonstrate single inference context, purpose-built LLM accuracy, contiguous audit trail, and Self-Healing Integration repair, all with production data.

The Agentic SOC Debate

d3security.com/resources/agentic-soc-debate/

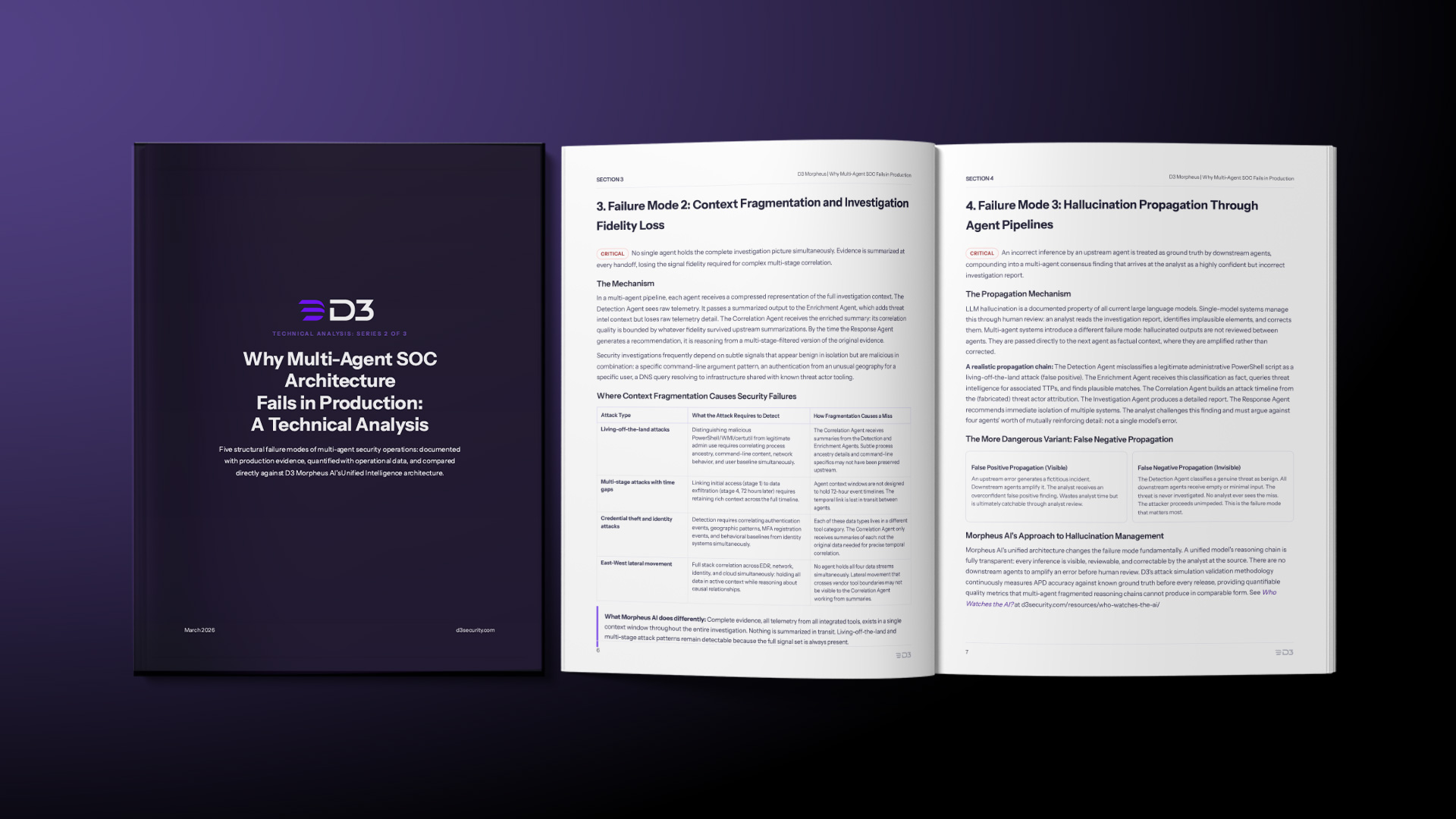

Why Multi-Agent SOC Architecture Fails in Production

d3security.com/resources/multi-agent-soc-risks/

Beyond Agentic: The Unified Intelligence Model (this paper)

d3security.com/resources/unified-intelligence-soc-model/