For over a decade, the SOAR model has been straightforward: hire specialized architects, build playbooks for every alert type, and maintain them as the threat landscape evolves. It brought repeatability and speed to security operations. It was the right model for its time.

But that time has passed.

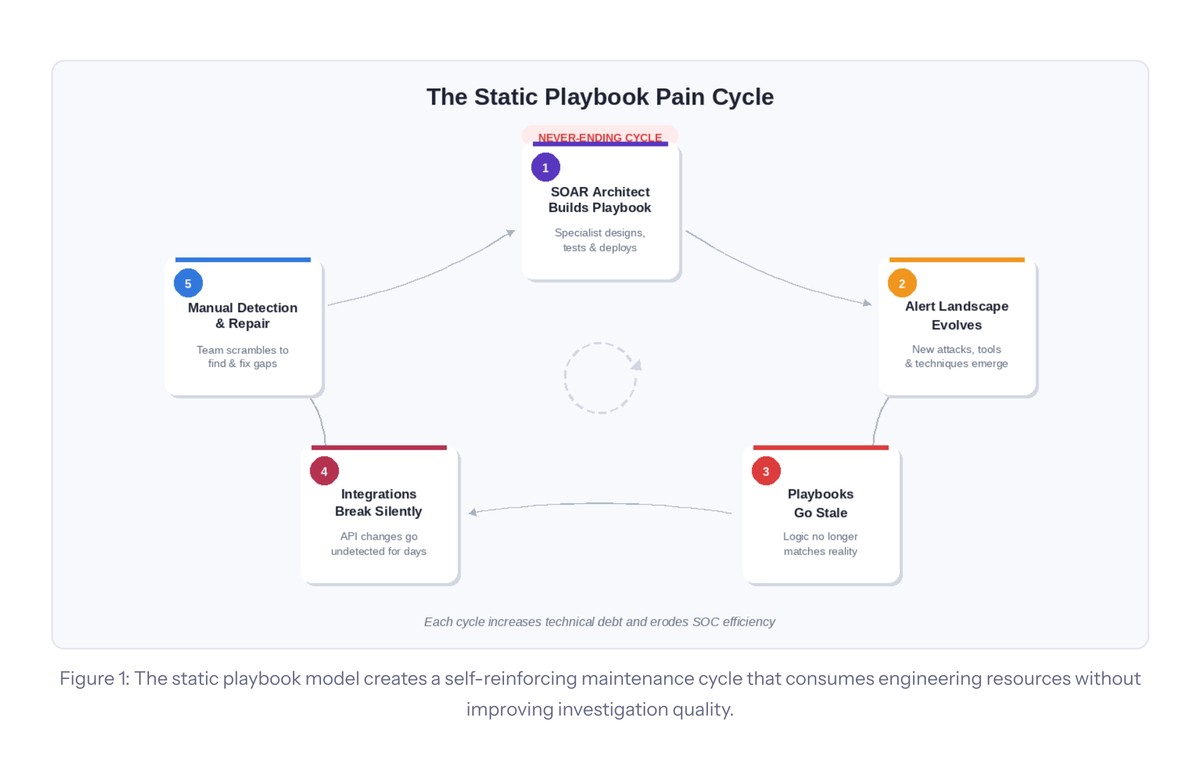

Today, most security teams find themselves trapped in a maintenance cycle that consumes more engineering resources every quarter without meaningfully improving investigation quality. The playbooks keep growing. The architects keep leaving. The integrations keep breaking. And the L1 analysts running the SOC at 2 AM still don’t get the investigative guidance they need.

The limitation is structural, baked into the architecture itself. A better UI won’t fix it.

The Five Fractures in the Static Playbook Model

Security leaders evaluating their next SOAR investment should be honest about what’s actually happening inside their SOC. The static playbook model is fracturing along five predictable lines.

SOAR architect dependency is the most obvious. Every playbook requires a specialist to design, build, test, and maintain it. That role is scarce and expensive, and it creates an acute staffing bottleneck. When the architect leaves, institutional knowledge walks out the door.

Playbook sprawl is the second. A mature SOC may operate hundreds of playbooks, each requiring ongoing updates as threats, tools, and procedures change. This maintenance burden grows linearly and routinely outpaces the team’s capacity to manage it.

Static logic in a dynamic threat landscape is the third. A phishing playbook runs the same investigation whether the target is an intern or the CFO, whether the payload is known malware or a novel zero-day. Context doesn’t reach the investigation because the investigation was designed without it.
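To make the gap concrete, here is a minimal Python sketch. It is illustrative only: the `PhishingAlert` fields, the step names, and the role list are all hypothetical and not taken from any real SOAR product. The first function returns the same steps for every alert, which is the behavior described above. The second shows what it would mean for context to reach the investigation at all.

```python
from dataclasses import dataclass


@dataclass
class PhishingAlert:
    recipient_role: str   # hypothetical field, e.g. "intern" or "cfo"
    payload_known: bool   # True if the payload matches known malware


# Hypothetical step names standing in for playbook actions.
STATIC_STEPS = ["check_sender_reputation", "detonate_attachment", "search_mailboxes"]


def static_playbook(alert: PhishingAlert) -> list[str]:
    # The alert is never inspected: every target and payload gets the same steps.
    return list(STATIC_STEPS)


def context_aware_plan(alert: PhishingAlert) -> list[str]:
    # Assumed example rules, chosen only to show context changing the plan.
    steps = list(STATIC_STEPS)
    if alert.recipient_role in {"cfo", "ceo", "finance"}:
        steps.append("review_recent_payment_activity")
    if not alert.payload_known:
        steps.append("escalate_to_malware_analysis")
    return steps
```

Hard-coding two extra branches is not a fix, of course. Every new branch is more logic for someone to maintain, which is how the sprawl described earlier starts.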

Silent integration failures are the fourth. When a vendor updates their API, dependent playbooks fail silently. Alerts queue, automation stops, and the break is often discovered hours or days later.
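One common mitigation is to check each integration response against the shape the playbook expects and to fail loudly when it drifts. The sketch below assumes a hypothetical reputation API that returns `verdict` and `score` fields. Those names, and the `IntegrationContractError` class, are invented for illustration and do not come from any vendor's API.

```python
class IntegrationContractError(Exception):
    """Raised when a vendor response no longer matches the expected shape."""


# Hypothetical contract: field name -> accepted Python type(s).
EXPECTED_FIELDS = {"verdict": str, "score": (int, float)}


def validate_response(payload: dict, expected: dict = EXPECTED_FIELDS) -> dict:
    """Return the payload unchanged, or raise with a list of every mismatch."""
    problems = []
    for field, types in expected.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], types):
            problems.append(f"wrong type for {field}: {type(payload[field]).__name__}")
    if problems:
        # Surface the break immediately instead of letting alerts queue.
        raise IntegrationContractError("; ".join(problems))
    return payload
```

A check like this turns a silent break into an explicit one. Someone still has to write and update the contract for every integration, so it adds to the maintenance burden rather than removing it.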

And the L1 analyst gap is the fifth. Static playbooks are designed by experienced engineers but executed in environments staffed by junior analysts. When an analyst needs to deviate from prescribed steps, they often lack the investigative experience to proceed effectively.

The playbook model creates a self-reinforcing maintenance cycle: build, maintain, break, detect, repair, repeat. Each turn of the cycle increases technical debt without improving investigation quality.

Why AI Copilots and Multi-Agent Systems Don’t Fix This

Across the SOAR market, vendors are responding with a remarkably uniform strategy: integrating general-purpose LLMs into their existing playbook platforms. Type a question, get an answer. Describe a workflow in plain English, get a draft playbook. Some vendors have gone further, introducing multi-agent architectures that coordinate specialized AI agents for investigation, remediation, and case management.

These are genuine productivity improvements, and they shouldn’t be dismissed. Faster playbook authoring, more accessible data querying, and a lower technical barrier for less experienced team members are real benefits.

The underlying operational model stays the same, though.

An AI copilot still requires humans to design investigation logic. It helps you build the same static playbooks faster, but it still can’t perform attack path discovery, autonomously trace lateral movement across your security stack, generate contextual playbooks tailored to the specific incident, fix broken integrations, or tell an L1 analyst what questions to ask. The ceiling remains.

Multi-Agent Complexity: The New Playbook Sprawl

Multi-agent architectures deserve special scrutiny because they’re being marketed as the next evolution beyond static playbooks. The premise is appealing: instead of one monolithic system, coordinate a fleet of specialized agents that investigate, remediate, and manage cases independently.

In practice, multi-agent systems introduce a distinct category of engineering burden that mirrors the playbook problem they claim to solve.

Where a traditional deployment requires maintaining hundreds of static playbooks, a multi-agent platform requires maintaining a portfolio of specialized agents, each with its own prompt engineering, tool configurations, RAG knowledge bases, and autonomy boundaries. An investigation agent, a triage agent, a remediation agent, and a case management agent may each require independent tuning, testing, and updating. The operational burden shifts from workflow logic to agent configuration.

The hidden costs of multi-agent SOAR:

- Agent sprawl replaces playbook sprawl, with each agent requiring its own prompt engineering, RAG pipelines, and tool configs

- Cascading failures across agent chains are harder to diagnose than a broken playbook step, because each agent’s reasoning is non-deterministic

- Threat landscape updates require per-agent prompt and RAG maintenance, creating a maintenance lifecycle for every agent

- A new staffing bottleneck emerges: someone who understands prompt engineering, LLM behavior, RAG design, agent orchestration, and cybersecurity operations, a profile arguably scarcer than the SOAR architect role it replaces

- Non-deterministic outputs break traditional testing, regression validation, and compliance audit trails

- Model provider dependency means a version upgrade by a third-party AI provider can silently alter agent behavior across your entire system
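
The testing and model-dependency points in the list above can be illustrated with a small regression harness. This is a sketch under stated assumptions: `classify` stands in for whatever call reaches an agent, and the two golden cases are invented examples, not real alert data. Each case runs several times, because one passing run says little about a non-deterministic system.

```python
from typing import Callable

# Invented golden cases: (alert text, expected verdict).
GOLDEN_CASES = [
    ("encoded powershell spawned by winword.exe", "malicious"),
    ("scheduled backup job completed", "benign"),
]


def regression_report(classify: Callable[[str], str],
                      cases: list = GOLDEN_CASES,
                      runs: int = 3) -> dict:
    """Run each case `runs` times and separate wrong verdicts from unstable ones."""
    wrong, unstable = [], []
    for text, expected in cases:
        verdicts = {classify(text) for _ in range(runs)}
        if len(verdicts) > 1:
            unstable.append(text)   # same input, different answers
        elif verdicts != {expected}:
            wrong.append(text)      # consistent, but consistently incorrect
    return {"wrong": wrong, "unstable": unstable,
            "passed": not wrong and not unstable}
```

Running a harness like this after every provider model upgrade is one way to notice changed behavior. It only covers the cases someone thought to write down, and every agent needs its own set.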

And here’s the risk that doesn’t get enough attention: unlike a playbook that fails explicitly when it encounters an unknown scenario, an agent powered by a general-purpose LLM may appear to handle a new threat confidently while producing incorrect or incomplete results. That is a silent failure mode, and arguably a more dangerous one than a playbook that simply stops.

What Actually Changes the Model

If the problem is structural, the fix has to be structural too.

Autonomous triage inverts the SOAR model entirely. Instead of humans designing investigation logic in advance, a purpose-trained cybersecurity AI ingests each alert, analyzes its full context, and generates a bespoke investigation and response at runtime. The intelligence moves from the playbook author to the platform itself.

On every incoming alert, an autonomous triage platform performs:

- Alert ingestion and context assembly across the full security stack

- Multi-dimensional attack path discovery, with both a vertical deep-dive into the alert’s origin tool and horizontal correlation across EDR, SIEM, cloud, identity, and network telemetry

- Contextual playbook generation tailored to the specific incident

- Transparent reasoning, where every step is described, editable, and auditable
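
Horizontal correlation, as described above, can be pictured with a toy example: take the entity named in an alert and collect events about that entity from other telemetry sources within a time window. The sketch is purely illustrative and does not show how any particular product implements attack path discovery. The `Event` fields, the `correlate` function, and the 15-minute default window are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    source: str       # e.g. "edr", "identity", "network" (hypothetical labels)
    entity: str       # host or user the event refers to
    timestamp: float  # seconds since epoch


def correlate(alert: Event, telemetry: list[Event],
              window_s: float = 900.0) -> dict[str, list[Event]]:
    """Group events from other sources that share the alert's entity and fall in the window."""
    related: dict[str, list[Event]] = {}
    for ev in telemetry:
        same_entity = ev.entity == alert.entity
        other_source = ev.source != alert.source
        in_window = abs(ev.timestamp - alert.timestamp) <= window_s
        if same_entity and other_source and in_window:
            related.setdefault(ev.source, []).append(ev)
    return related
```

Real correlation has to handle entity resolution (hostnames versus IPs versus user IDs), clock skew, and far larger data volumes, none of which this sketch attempts.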

The implications are structural: AI-driven triage eliminates the need for SOAR architects, removes the playbook maintenance lifecycle, delivers L2-level investigation results at L1 cost, runs context-sensitive investigation on every alert, and provides self-healing integrations that eliminate the silent-failure problem.

The critical question is whether the AI architecture eliminates the maintenance burden entirely, or merely redistributes it into a form that’s newer, less understood, and potentially harder to manage.

Questions Worth Asking in Your Next Evaluation

If you’re evaluating SOAR platforms in 2026, there are a few questions that will quickly separate architectural approaches from cosmetic ones.

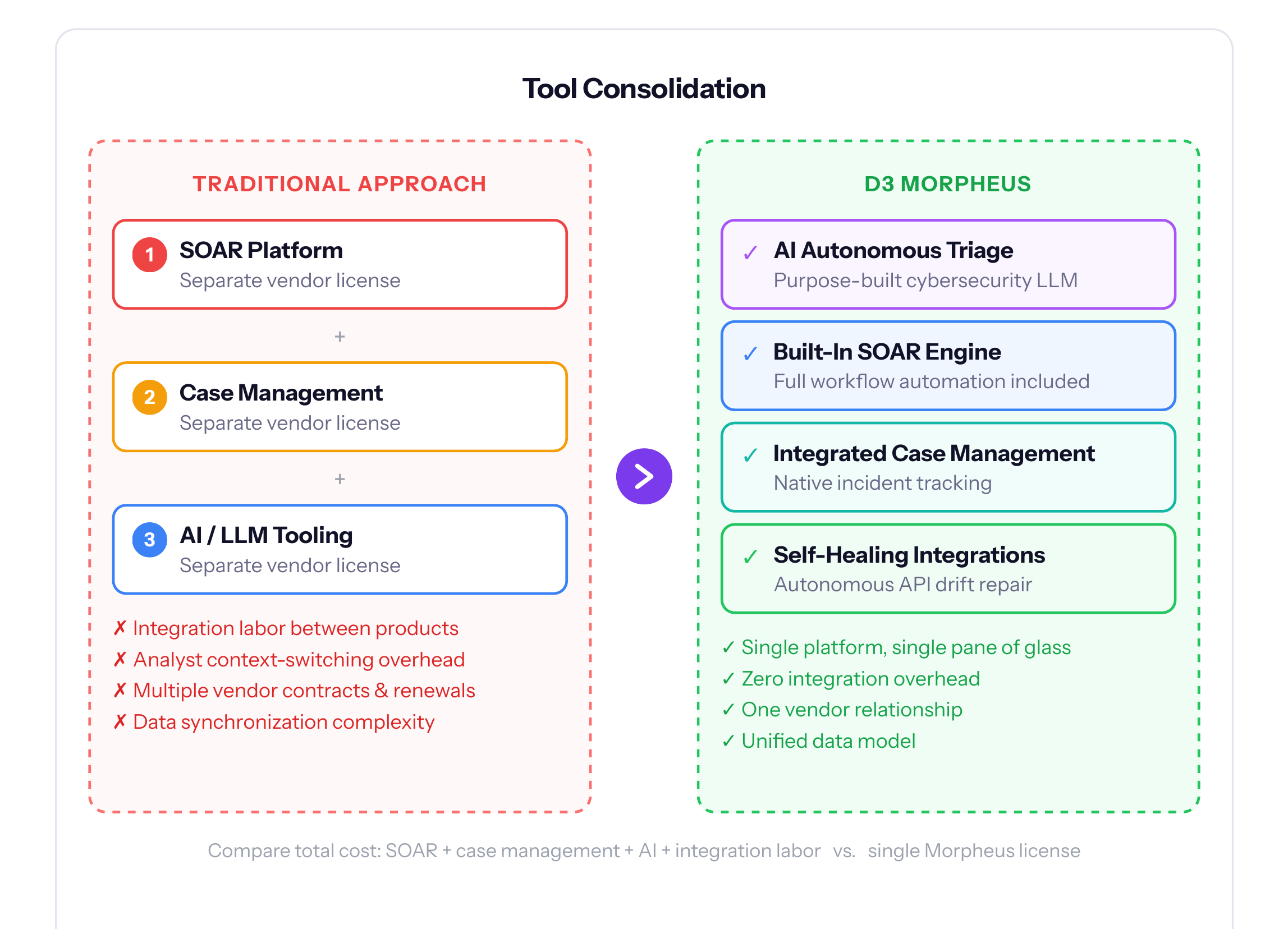

- How many SOAR architects do you currently employ to build and maintain playbooks, and what happens when key personnel leave?

- How many of your playbooks are stale or outdated right now?

- When an alert fires at 2 AM, does your platform investigate it autonomously, or does it wait for a human?

- Does your current platform deliver L2-level investigation results to L1 analysts?

- How many separate products do you operate for workflow automation, case management, and AI tooling?

- If the market moves to AI-driven autonomous triage over the next two to three years, can your current platform make that transition, or will you need to replace it entirely?

These aren’t rhetorical. They’re the questions that reveal whether your current approach is scaling with your threat landscape or falling further behind every quarter.

See Autonomous Triage in Action

Request a live demonstration of D3 Morpheus using alert data representative of your environment, including attack path discovery, contextual playbook generation, and the analyst review experience.

Read the Full Resource: The SOAR Ceiling: Why Playbook Automation Has Hit Its Structural Limits

A comprehensive analysis of the five structural fractures in the static playbook model, why AI copilots and multi-agent architectures don’t solve them, and what autonomous triage means for the future of security operations.