Executive Summary

The Security Orchestration, Automation, and Response (SOAR) market is undergoing a fundamental architectural shift. For over a decade, SOAR platforms delivered value through static playbook automation — predefined workflows that execute consistent, repeatable response steps. That model worked when threats were predictable and security stacks were simpler. Today, it has reached its structural ceiling.

Static playbooks cannot adapt to novel attack patterns in real time. They require expensive, scarce SOAR architects to build and maintain. They can break silently when vendor APIs change. And they deliver L1-level investigation outputs to analysts who increasingly need L2-depth results. Adding a natural language interface to a static playbook generator, the approach most vendors are taking, makes the platform easier to query but does not eliminate these problems.

A fundamentally different approach has emerged: AI-driven autonomous triage. In this model, a purpose-trained cybersecurity LLM performs attack path discovery, generates contextual playbooks from scratch at runtime, and self-heals integrations — on every incoming alert, without human initiation.

D3 Morpheus AI is the first platform to fully operationalize this model. Its purpose-built cybersecurity LLM is customer-expandable, fully transparent, and auditable at every step. Morpheus also includes a complete built-in SOAR engine, enabling organizations to run static playbooks alongside autonomous AI triage — and transition at their own pace.

- <2 min: L2-quality alert triage, per alert, 24/7

- 0: SOAR architects required for AI-driven triage

- 3-in-1: AI triage + SOAR engine + case management unified

This whitepaper examines where the static playbook model breaks down, why natural language overlays and multi-agent architectures fail to resolve the core structural issues, and how autonomous triage delivers a fundamentally better operational outcome for enterprise SOC teams.

Table of Contents

- The Structural Limitations of Static Playbooks

- The Natural Language Overlay: What It Does and Does Not Change

- The Autonomous Triage Model

- D3 Morpheus: How Autonomous Triage Works in Practice

- Capability Summary

- Questions for Your Evaluation

- Next Steps

1. The Structural Limitations of Static Playbooks

Static playbooks emerged as the answer to a real problem: manual incident response was too slow, too inconsistent, and too dependent on individual analyst skill. By encoding investigation and response steps into automated workflows, SOAR platforms delivered measurable improvements in response time and analyst efficiency.

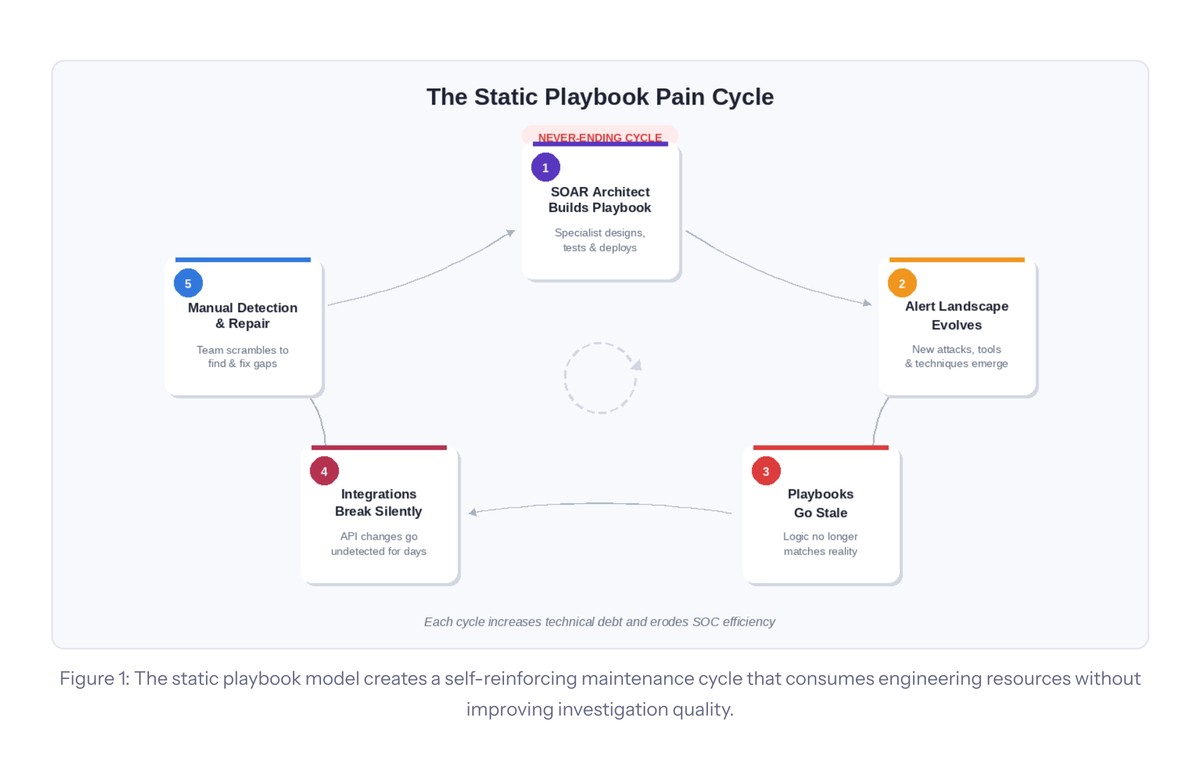

However, this model carries inherent limitations that become more acute as organizations scale:

SOAR architect dependency.

Every playbook requires a specialist to design, build, test, and maintain it. This role is scarce, expensive, and creates a single point of failure. When that specialist leaves or is unavailable, playbook development stalls.

Playbook sprawl and maintenance burden.

A mature SOC may operate hundreds of playbooks, each requiring ongoing updates as the threat landscape, tool stack, and vendor APIs evolve. Maintenance consumes engineering cycles that could otherwise improve detection coverage.

Static logic in a dynamic threat landscape.

Pre-built playbooks execute the same steps regardless of context. A phishing playbook runs the same investigation whether the alert is a credential harvest targeting the CFO or a routine bulk-send flagged by an email gateway — missing the nuance that determines actual severity and appropriate response.

Silent integration failures.

When a vendor changes its API, playbooks that depend on that integration can fail silently. Alerts queue, automation stops, and the failure may go undetected for days, during which threats are not being investigated.

The L1 analyst gap.

Static playbooks are designed by experienced engineers but executed in environments staffed by junior L1 analysts. When the analyst encounters a result the playbook didn’t anticipate, or a step that requires judgment, the automation stops and the ticket escalates — consuming exactly the senior analyst time the platform was meant to protect.

2. The Natural Language Overlay: What It Does and Does Not Change

Across the SOAR market, vendors are responding to the rise of AI with a remarkably uniform strategy: integrating general-purpose LLMs into their existing static playbook platforms. The result is a natural language interface layered on top of an unchanged operational model — analysts can now query their SOAR in plain English, but the underlying architecture remains the same.

2.1 What the Overlay Provides

Natural language overlays deliver genuine quality-of-life improvements within the static playbook model. These include faster playbook authoring, more accessible query interfaces for analysts, improved onboarding experiences for new SOAR users, and conversational interfaces for routine lookups. These are real benefits that reduce friction for teams already committed to the static playbook model.

2.2 What the Overlay Does Not Change

Despite the addition of LLM-powered interfaces, the underlying operational model remains unchanged. Specifically, a natural language overlay does not:

- Perform attack path discovery. The AI does not autonomously trace lateral movement across the security stack. It can query data when asked, but it does not proactively investigate each alert end-to-end without human initiation.

- Generate contextual playbooks. The investigation logic still resides in pre-built, manually maintained workflows. The AI may help build a playbook faster, but that playbook is still static once deployed.

- Eliminate the SOAR architect dependency. Playbooks still need to be designed, tested, versioned, and maintained by specialists. Making playbook authoring easier in natural language reduces friction, but the maintenance lifecycle remains.

- Provide investigative guidance to L1 analysts. A natural language tool gives an L1 analyst the ability to ask questions faster, but it does not tell them what questions to ask, interpret ambiguous results, or make triage judgments on their behalf.

- Fix broken integrations. When a vendor updates their API and a playbook step fails silently, the LLM overlay has no mechanism to detect the failure, diagnose the cause, and generate a corrected integration — it simply reflects the failure back to the user.

The critical distinction for buyers: adding a natural language interface to a static playbook generator makes the existing model more accessible. It does not address the structural constraints that limit how far that model can scale.

2.3 The Copilot and Multi-Agent Approach in Practice

The copilot model illustrates both genuine strengths and structural boundaries. As a natural language interface to an existing workflow builder, AI copilots reduce time-to-playbook and lower the barrier for less technical analysts to query their environment. These are real workflow improvements. However, neither copilots nor multi-agent orchestration systems change the fundamental operational model when the underlying AI relies on general-purpose foundation models not trained specifically on cybersecurity triage tasks.

Critically, these platforms do not perform attack path discovery. When an alert arrives, there is no autonomous correlation across EDR, SIEM, identity, and network telemetry — only the human-initiated queries or static workflows that existed before the AI layer was added. The investigation depth is bounded by the general-purpose model’s training, not by security-domain expertise.

2.4 The Hidden Cost of Multi-Agent Complexity

Multi-agent architectures are marketed as the next evolution beyond static playbooks, but security leaders should examine whether they actually eliminate operational complexity — or simply redistribute it. Each agent needs independent prompt engineering, RAG configuration, and tool-calling setup. Where a traditional SOAR requires maintaining hundreds of static playbooks, a multi-agent platform requires maintaining hundreds of agent prompts, each with its own failure mode, testing requirement, and non-deterministic behavior.

3. The Autonomous Triage Model

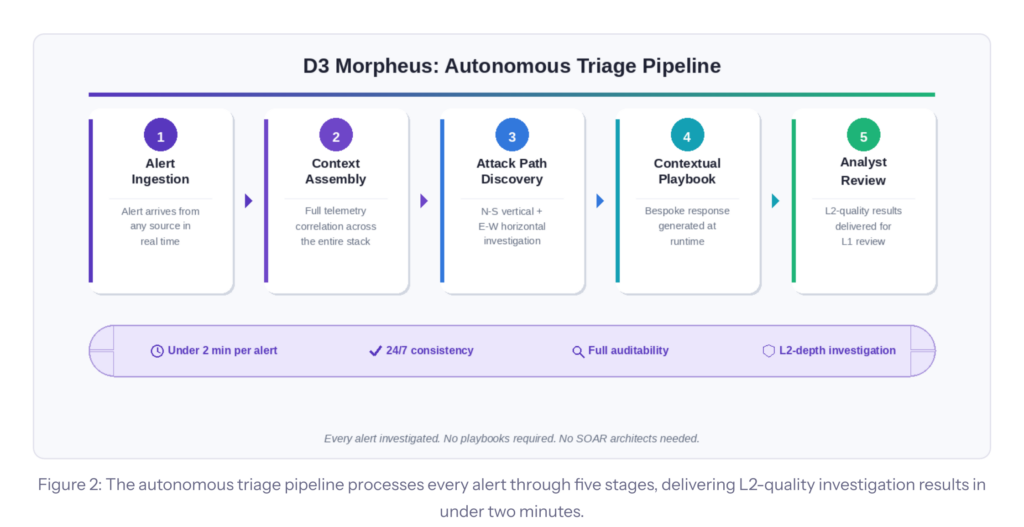

Autonomous triage inverts the SOAR model. Instead of humans designing the investigation logic in advance, a purpose-trained AI ingests each alert, analyzes it against the full security stack context, and delivers L2-level investigation results — without requiring a human to initiate the process or a playbook to be pre-built.

On every incoming alert, an autonomous triage platform performs alert ingestion and context assembly across the full security stack; multi-dimensional attack path analysis (vertical North-South deep inspection and horizontal East-West lateral movement tracing); contextual playbook generation tailored to that specific alert; and automated response execution with full auditability.

- Alert Ingestion: alert arrives from any source in real time

- Context Assembly: full telemetry correlation across the entire stack

- Attack Path Discovery: N-S vertical + E-W horizontal investigation

- Contextual Playbook: bespoke response generated at runtime

- Analyst Review: L2-quality results delivered for L1 review
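The five stages above can be sketched as a simple sequential pipeline. This is a minimal illustration under stated assumptions, not Morpheus AI's implementation: the `Alert` type, the stage functions, and the telemetry shape are all hypothetical, and the two model-driven stages (attack path discovery and playbook generation) are replaced by trivial placeholder logic so that the control flow stays visible.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    """Hypothetical normalized alert; field names are illustrative only."""
    alert_id: str
    source: str
    entity: str  # host or user the alert concerns
    context: dict = field(default_factory=dict)
    findings: list = field(default_factory=list)
    playbook: list = field(default_factory=list)


def ingest(raw: dict) -> Alert:
    # Alert Ingestion: normalize a raw event from any source.
    return Alert(alert_id=raw["id"], source=raw["source"], entity=raw["entity"])


def assemble_context(alert: Alert, telemetry: dict) -> None:
    # Context Assembly: collect every telemetry record that mentions the entity.
    alert.context = {
        src: [rec for rec in records if rec.get("entity") == alert.entity]
        for src, records in telemetry.items()
    }


def discover_attack_path(alert: Alert) -> None:
    # Attack Path Discovery (placeholder): keep the sources with correlated activity.
    alert.findings = [src for src, recs in alert.context.items() if recs]


def build_playbook(alert: Alert) -> None:
    # Contextual Playbook (placeholder): steps are derived from this alert's
    # findings at runtime instead of being selected from a pre-built workflow.
    alert.playbook = [f"review {src} activity for {alert.entity}" for src in alert.findings]
    if not alert.playbook:
        alert.playbook = ["no correlated activity; queue for analyst confirmation"]


def triage(raw: dict, telemetry: dict) -> Alert:
    # Runs every stage without human initiation; the result goes to Analyst Review.
    alert = ingest(raw)
    assemble_context(alert, telemetry)
    discover_attack_path(alert)
    build_playbook(alert)
    return alert


telemetry = {
    "edr": [{"entity": "ws-12", "event": "encoded PowerShell"}],
    "siem": [{"entity": "ws-40", "event": "failed logins"}],
    "identity": [{"entity": "ws-12", "event": "new admin session"}],
}
result = triage({"id": "A-1", "source": "email_gateway", "entity": "ws-12"}, telemetry)
print(result.findings)  # ['edr', 'identity']
print(result.playbook)
```

The point of the structure is that nothing in `triage` waits on a human: every alert passes through all stages, and the analyst sees the finished result rather than starting the investigation.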

The implications are structural, not incremental: no SOAR architects required for AI-driven triage, no playbook maintenance lifecycle, L2-level triage depth delivered to every L1 analyst, self-healing integrations that detect and repair API drift in real time, and 24/7 investigation coverage without human initiation.

4. D3 Morpheus: How Autonomous Triage Works in Practice

D3 Morpheus AI is the first platform to fully operationalize the autonomous triage model for enterprise security operations.

4.1 Purpose-Built Cybersecurity LLM

At its core is a cybersecurity triage LLM developed over 24 months by a team of 60 specialists — red teamers, data scientists, AI engineers, and SOC analysts. The model is trained exclusively on security operations data: alerts, attack paths, investigation results, and remediation actions. It does not rely on a general-purpose foundation model as its reasoning engine for triage decisions.

4.2 Customer-Expandable LLM

Customers can expand and customize the LLM for their specific environment, threat landscape, and SOC procedures. The result is a proprietary, customer-specific intelligence layer that improves with each investigation — learning the organization’s tool stack, escalation thresholds, and analyst preferences over time.

4.3 Attack Path Discovery on Every Alert

On every incoming alert, Morpheus AI performs multi-dimensional correlation: vertical (North-South) deep inspection into the alert’s origin tool and horizontal (East-West) lateral movement tracing across EDR, SIEM, identity, and network telemetry. This delivers the attack path context that determines true severity — without analyst initiation.
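The two directions of correlation can be illustrated with a small sketch. Both functions are hypothetical: `north_south` filters the originating tool's events for the alerted entity, and `east_west` runs a breadth-first search over observed connections to find the hosts reachable from the alerted one within a hop limit. The connections are assumed to be simple `(source, destination)` pairs already extracted from EDR, SIEM, identity, and network telemetry; a production system would also weight edges by time and confidence, which this sketch does not attempt.

```python
from collections import deque


def north_south(entity: str, origin_events: list) -> list:
    # Vertical: drill into the originating tool's own records for the alerted entity.
    return [e for e in origin_events if e.get("entity") == entity]


def east_west(start: str, edges: list, max_hops: int = 3) -> list:
    # Horizontal: breadth-first search over observed connections (src -> dst),
    # returning each hop that extends a reachable lateral-movement path.
    graph = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
    depth = {start: 0}
    hops = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if depth[node] >= max_hops:
            continue
        for nxt in graph.get(node, []):
            if nxt not in depth:  # visit each node once so cycles terminate
                depth[nxt] = depth[node] + 1
                hops.append((node, nxt))
                queue.append(nxt)
    return hops


edr_events = [
    {"entity": "ws-12", "event": "Office macro spawned PowerShell"},
    {"entity": "ws-40", "event": "signature update"},
]
connections = [("ws-12", "fs-01"), ("fs-01", "dc-01"), ("dc-01", "backup-02"), ("ws-99", "ws-12")]

print(north_south("ws-12", edr_events))
print(east_west("ws-12", connections, max_hops=2))  # [('ws-12', 'fs-01'), ('fs-01', 'dc-01')]
```

Raising `max_hops` widens the horizontal search (here it would add `dc-01 -> backup-02`), which is the depth-versus-cost trade-off any lateral movement trace has to make.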

4.4 Contextual Playbook Generation

Because Morpheus AI understands the alert context, the customer’s tool stack, and the organization’s SOC preferences, it generates a bespoke playbook for each alert at runtime. No pre-built workflow is required. Each playbook reflects the specific threat, environment, and response constraints relevant to that case.

4.5 Self-Healing Integrations

Morpheus AI continuously monitors the behavior and response schemas of connected integrations, detects API drift and schema changes in real time, and generates corrected integration logic autonomously — eliminating the silent failure mode that plagues static playbook deployments. Integration health is maintained without engineer intervention.
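The detection half of self-healing can be sketched as a schema comparison between a known-good integration response and the latest one. This is an assumption-laden illustration, not Morpheus AI's mechanism: it records each response field's dotted path and Python type, then reports fields that disappeared, appeared, or changed type. The function names and the sample payloads are hypothetical, and generating corrected integration logic is not shown.

```python
def flatten_schema(obj, prefix=""):
    """Map each dotted key path in a JSON-like response to its Python type name."""
    if not isinstance(obj, dict):
        return {prefix: type(obj).__name__}
    schema = {}
    for key, value in obj.items():
        path = f"{prefix}.{key}" if prefix else key
        schema.update(flatten_schema(value, path))
    return schema


def detect_drift(baseline: dict, response) -> dict:
    """Compare a stored baseline schema with the schema of a new response."""
    current = flatten_schema(response)
    return {
        "missing": sorted(set(baseline) - set(current)),
        "added": sorted(set(current) - set(baseline)),
        "type_changed": sorted(
            k for k in set(baseline) & set(current) if baseline[k] != current[k]
        ),
    }


# Known-good response captured earlier, then a response after a vendor update.
baseline = flatten_schema({"device": {"id": "abc123", "risk_score": 80}, "status": "ok"})
after_update = {"device": {"device_id": "abc123", "risk_score": "high"}, "status": "ok"}

drift = detect_drift(baseline, after_update)
print(drift)
# {'missing': ['device.id'], 'added': ['device.device_id'], 'type_changed': ['device.risk_score']}
print(any(drift.values()))  # True: raise an integration-health event instead of failing silently
```

A playbook step that reads `device.id` would otherwise receive nothing and carry on; surfacing the `missing` entry is what turns that silent failure into an actionable signal.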

4.6 Built-In SOAR: Start Static, Go Autonomous

Morpheus AI includes a full built-in SOAR engine alongside its autonomous AI capabilities. Organizations can run both models simultaneously: static playbooks for well-understood, high-volume scenarios and autonomous AI triage for novel, complex, or high-severity threats. This dual-mode architecture lets organizations migrate at their own pace — starting with the SOAR they know, adopting autonomous triage incrementally as confidence builds.

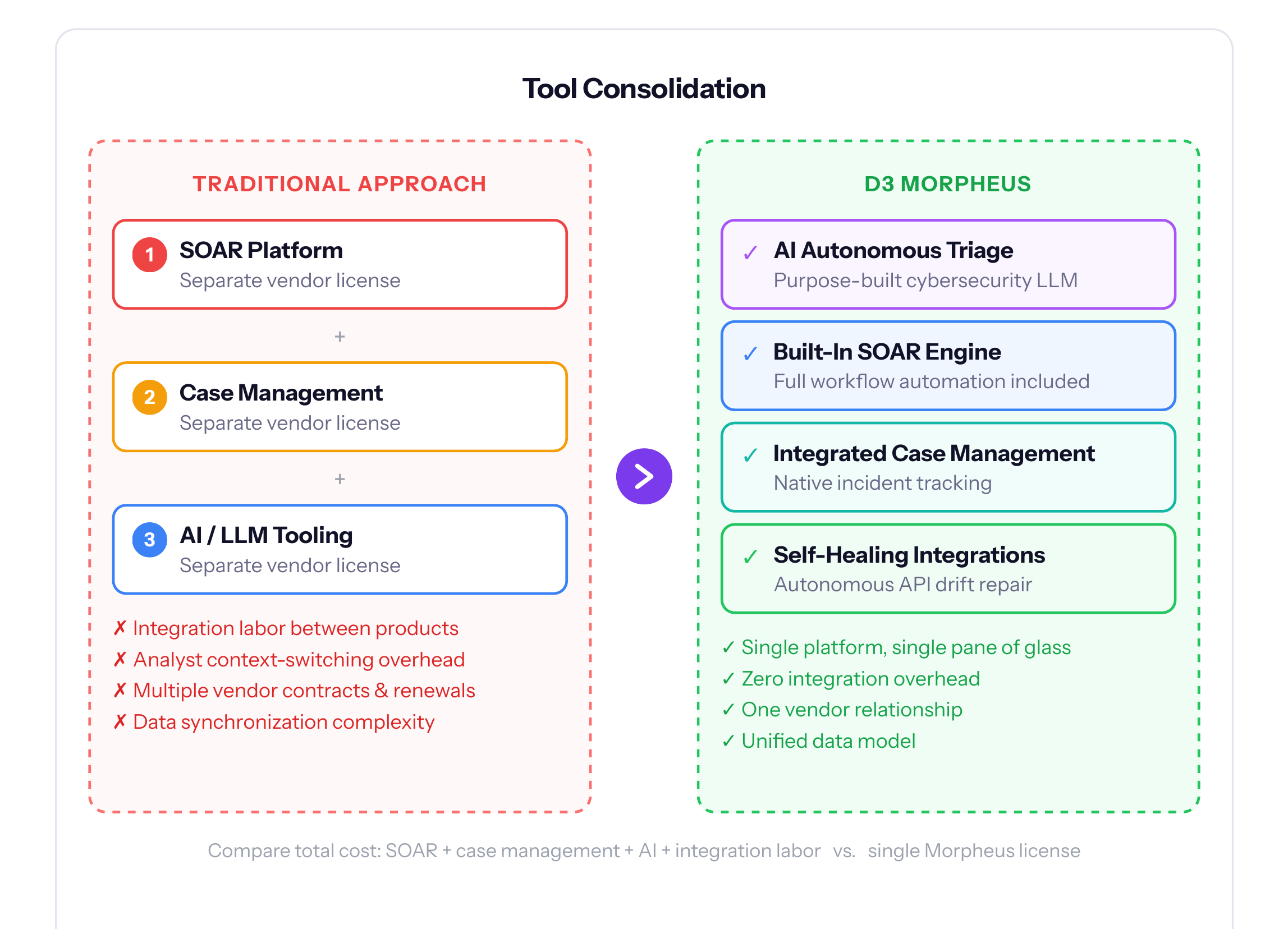

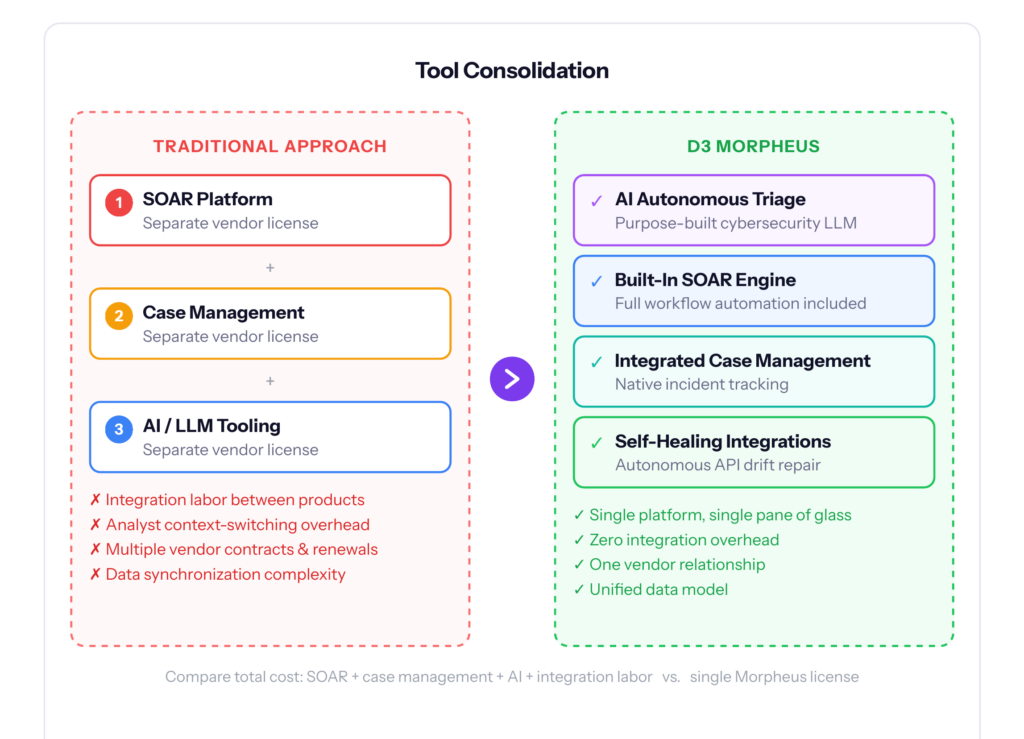

4.7 Tool Consolidation

Morpheus AI consolidates AI-driven autonomous triage, a full-featured traditional SOAR engine, and integrated case management into a single platform. This eliminates the integration overhead, data synchronization complexity, and analyst context-switching that come with maintaining three separate products.

4.8 Governance, Deployment, and Enterprise Scale

Morpheus AI is architected for Fortune 100-scale environments and supports Git-based change control, open YAML playbook format, LLM swappability, fully isolated on-premises deployment, and role-based access controls across all AI and workflow functions. Every AI decision is logged, explained, and auditable — meeting enterprise compliance requirements without sacrificing operational speed.

4.9 LLM Security, Privacy, and Model Configuration

As LLMs become embedded in security operations, the security posture of the AI layer itself becomes a critical evaluation criterion. The choice between a purpose-built cybersecurity LLM and a general-purpose foundation model relay has material consequences for data privacy, adversarial robustness, and governance:

Model Architecture: Purpose-Built vs. Foundation Model Relay

Copilot and multi-agent platforms typically relay queries via API to general-purpose foundation models (such as models accessed through AWS Bedrock, OpenAI, or Google). Morpheus AI uses a domain-specific model trained entirely on cybersecurity data, with constrained output schemas that prevent the model from generating actions outside defined security operations parameters.
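A constrained output schema can be illustrated as a validation layer between the model and the action executor. In this sketch the allowlist of action names and their required parameters (`ALLOWED_ACTIONS`) and the JSON response shape are hypothetical; the point is that any model output naming an unlisted action, or passing unexpected parameters, is rejected before anything runs.

```python
import json

# Hypothetical allowlist: each permitted action and the exact parameters it takes.
ALLOWED_ACTIONS = {
    "isolate_host": {"host"},
    "disable_account": {"user"},
    "block_sender": {"email"},
    "escalate_to_analyst": {"reason"},
}


def validate_action(model_output: str) -> dict:
    """Accept a model's proposed action only if it fits the constrained schema."""
    try:
        proposal = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(proposal, dict):
        raise ValueError("model output must be a JSON object")

    action = proposal.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action {action!r} is outside the allowed set")

    params = proposal.get("params")
    if not isinstance(params, dict) or set(params) != ALLOWED_ACTIONS[action]:
        raise ValueError(f"{action} requires exactly {sorted(ALLOWED_ACTIONS[action])}")
    if not all(isinstance(v, str) and v for v in params.values()):
        raise ValueError("parameter values must be non-empty strings")

    return {"action": action, "params": params}


print(validate_action('{"action": "isolate_host", "params": {"host": "ws-12"}}'))
# {'action': 'isolate_host', 'params': {'host': 'ws-12'}}

try:
    validate_action('{"action": "delete_logs", "params": {"path": "/var/log"}}')
except ValueError as err:
    print(err)  # action 'delete_logs' is outside the allowed set
```

Because the check runs outside the model, it limits what a manipulated or mistaken response can trigger regardless of how that response was produced.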

Data Privacy and Residency

Some copilot-augmented platforms host AI features on managed cloud services with commitments that data is not used for model training. Morpheus AI supports fully isolated on-premises deployment, eliminating third-party model vendor dependency entirely. Alert data, investigation context, and case evidence never leave the customer’s environment.

Prompt Injection and Adversarial Risk

Any platform that exposes an LLM to untrusted input — alert data, email bodies, log entries — faces prompt injection risk. Morpheus AI’s domain-specific model and constrained output schemas provide structural guardrails that general-purpose models lack. Multi-agent architectures expand this attack surface: each agent’s tool-calling and RAG pipeline represents an additional injection vector.

Model Governance and Configurability

Morpheus AI provides tiered autonomy control: fully deterministic (no AI involvement), AI-assisted (human-in-the-loop), and fully autonomous modes — configurable per alert type, severity threshold, or organizational policy. The model is swappable, and all AI outputs are explainable at the step level, enabling compliance review without sacrificing speed.
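Per-alert-type, per-severity autonomy selection can be sketched as a small policy lookup. The three `Mode` values mirror the tiers described above; the `POLICY` contents, the 0 to 10 severity scale, and the default mode are hypothetical choices for illustration, not product configuration.

```python
from enum import Enum


class Mode(Enum):
    DETERMINISTIC = "deterministic"  # static playbook only, no AI involvement
    AI_ASSISTED = "ai_assisted"      # AI investigates and proposes; a human approves
    AUTONOMOUS = "autonomous"        # AI investigates and executes within guardrails


# Hypothetical policy: per alert type, (minimum severity, mode) pairs on a 0-10 scale.
POLICY = {
    "phishing": [(0, Mode.DETERMINISTIC), (4, Mode.AUTONOMOUS)],
    "lateral_movement": [(0, Mode.AI_ASSISTED), (8, Mode.AUTONOMOUS)],
}
DEFAULT_MODE = Mode.AI_ASSISTED  # unknown alert types keep a human in the loop


def select_mode(alert_type: str, severity: int) -> Mode:
    """Return the mode attached to the highest threshold the severity reaches."""
    mode = DEFAULT_MODE
    for threshold, candidate in sorted(POLICY.get(alert_type, []), key=lambda rule: rule[0]):
        if severity >= threshold:
            mode = candidate
    return mode


for alert_type, severity in [("phishing", 2), ("phishing", 6), ("lateral_movement", 9), ("new_type", 5)]:
    print(alert_type, severity, select_mode(alert_type, severity).value)
```

In this example, low-severity phishing stays on a deterministic playbook while higher-severity cases go to autonomous triage, in line with the dual-mode usage described in Section 4.6; an unrecognized alert type falls back to human-in-the-loop review.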

5. Capability Summary

The following table compares D3 Morpheus AI against copilot-augmented and multi-agent SOAR platforms at the category level; verify specific vendor capabilities against current product documentation.

| Capability | D3 Morpheus AI | Copilot / Multi-Agent SOAR |
|---|---|---|
| Cybersecurity LLM | Purpose-built; customer-expandable | Generic foundation models (e.g., AWS Bedrock) used as copilot tools; BYOAI available |
| Attack Path Discovery | Autonomous on every alert; L2-level depth | Multi-agent task decomposition; depth bounded by general-purpose model training |
| Playbook Generation | Contextual; generated at runtime per alert | Static; manually built and maintained |
| Self-Healing Integrations | Autonomous API drift detection and repair | Manual detection and repair required |
| Triage Level | L2-level investigation results to every L1 analyst | L1 query assistance; multi-agent execution within guardrails |
| Built-In SOAR | Full SOAR engine + autonomous AI (dual mode) | Static playbook automation |
| Case Management | Integrated natively in platform | Typically a separate product |
| LLM Transparency | Every step described, editable, auditable | Standard API responses; some vendors offer audit logging and RBAC |
| LLM Customization | Customer-expandable domain-specific model | BYOAI provider selection; RAG contextual knowledge; no domain fine-tuning |
| SOAR Architect Requirement | Not required for AI-driven triage | Required for complex workflow design |
| Agent Maintenance | Purpose-built LLM evolves; no per-agent upkeep | Each agent requires independent prompt, RAG, and tool maintenance |
| Future-Proofing | Start static, migrate to fully autonomous | Static model with AI convenience layer added |
| Tool Consolidation | AI triage + SOAR + case management unified | Workflow automation only; additional products needed |
| LLM Data Privacy | Fully isolated on-premises; no third-party model vendor | Cloud-hosted via third-party providers; BYOAI shifts privacy burden to customer |
| Adversarial Robustness | Domain guardrails; constrained schemas; purpose-trained | General-purpose guardrails; multi-agent tool-calling expands injection surface |

6. Questions for Your Evaluation

As you evaluate SOAR platforms, the following questions will help clarify which architecture best fits your organization:

Q1. How many SOAR architects or engineers do you currently employ to build and maintain playbooks? What is the annual cost of that function, and what happens to your automation coverage if that person leaves?

Q2. How many playbooks are you maintaining today, and how many are stale or outdated? What is the update cycle, and who owns it?

Q3. How much time does your team spend detecting and repairing broken integrations? How quickly are integration breaks typically discovered after a vendor API update?

Q4. When an alert fires at 2 AM, does your current platform investigate it autonomously, or does it wait for a human to initiate the investigation?

Q5. Does your current platform deliver L2-level investigation results to L1 analysts, or does investigation quality depend on the individual analyst’s experience?

Q6. How many separate products do you operate for workflow automation, case management, and AI tooling? What is the total cost of ownership across all of them, including integration maintenance?

Q7. If the market moves to AI-driven autonomous triage over the next two to three years, can your current platform make that transition — or will you need to re-platform entirely?

7. Next Steps

We recognize that evaluating a platform shift of this magnitude requires more than a capability comparison. D3 Security offers the following structured engagement paths for security leaders ready to move forward:

Personalized Platform Demonstration

Live demonstrations of Morpheus AI using alert data representative of your environment, including attack path discovery on realistic alert scenarios, contextual playbook generation in real time, and self-healing integration behavior. This is not a canned demo; it is calibrated to your stack and threat profile.

Proof of Value (POV) Engagement

Deploy Morpheus AI against a defined subset of your alert stream. Measure triage accuracy and time-to-resolution against your current platform. D3 provides structured POV methodology, success criteria definition, and measurement frameworks to produce a rigorous, decision-grade comparison.

Total Cost of Ownership Analysis

A comprehensive TCO comparison that includes platform licensing, SOAR architect staffing costs, playbook maintenance labor, separate case management and AI tooling costs, and integration overhead. Most organizations find that tool consolidation alone produces material savings independent of the operational improvements.

Architecture and Migration Planning

For organizations with existing SOAR deployments, D3 provides architecture consultation to plan a migration path that minimizes disruption. The built-in SOAR engine allows existing playbooks to run in parallel while autonomous AI triage is adopted incrementally — protecting existing investment while building toward the autonomous model.